Model Compression Strategies: How to Make AI Models Smaller, Faster, and More Deployable

By Sagar Shankaran, Founder of CallSphere


Artificial intelligence is advancing rapidly, but bigger models are not always better for real-world deployment. In production environments, teams often need models that are not just accurate, but also efficient, cost-effective, and fast enough to run at scale.

That is where model compression becomes essential.

Model compression strategies help reduce the size and computational demands of machine learning models while preserving as much performance as possible. For organizations building generative AI systems, edge AI applications, or large-scale inference pipelines, compression is often the difference between a promising prototype and a deployable solution.

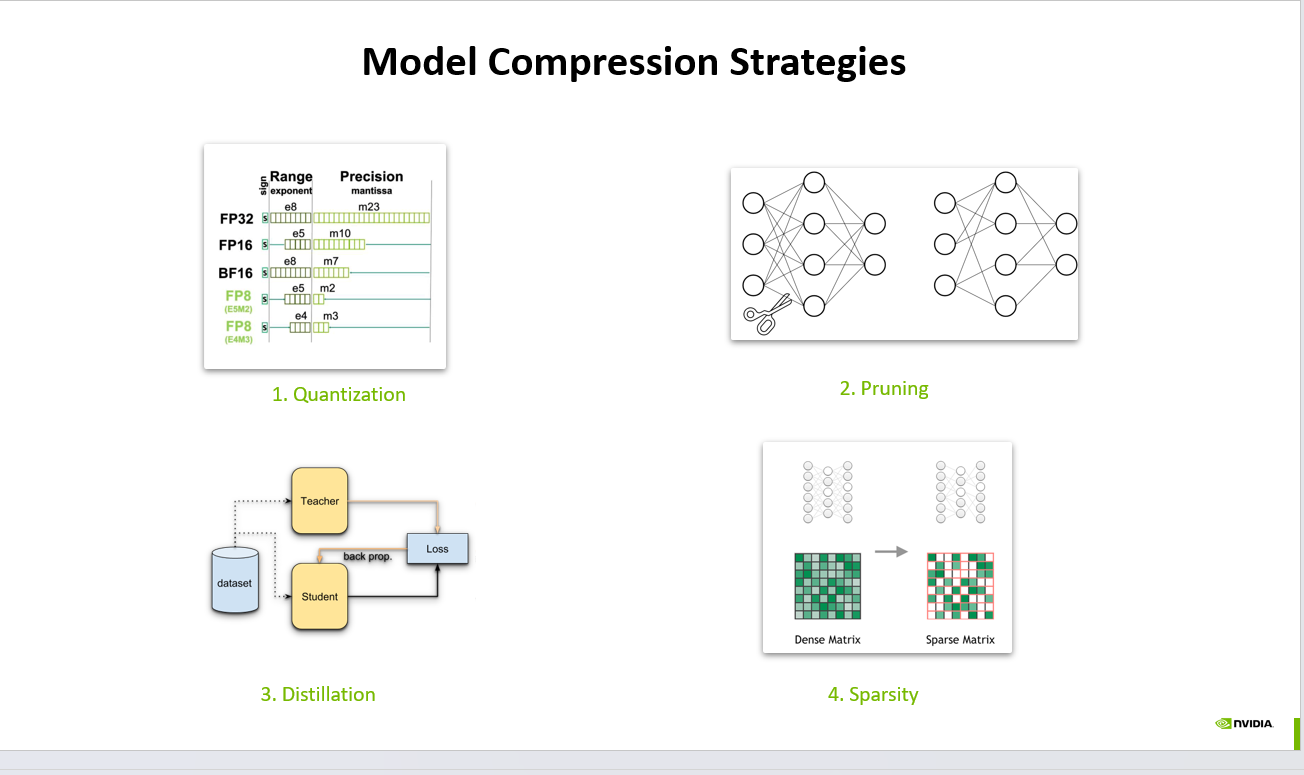

In this article, we will break down four core model compression strategies shown in the visual above: quantization, pruning, distillation, and sparsity.

Why Model Compression Matters

Modern AI systems are powerful, but they are also expensive to train, store, and serve. Large models can introduce challenges such as:

High inference latency

Increased memory consumption

Greater infrastructure cost

Deployment limitations on mobile, edge, and embedded devices

Higher energy usage in production AI systems

Model compression addresses these issues by making models more efficient, often without requiring a complete redesign of the architecture.

1. Quantization

Quantization reduces the numerical precision used to represent model weights and activations.

For example, instead of storing values as 32-bit floating point (FP32), a model may be converted to 16-bit formats such as FP16 or BF16, or to even lower-precision formats such as FP8 or INT8. Moving weights from FP32 to INT8, for instance, cuts their storage by roughly 4x, and lower-precision arithmetic can significantly improve inference speed on hardware that supports it.
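To make the idea concrete, here is a minimal sketch of symmetric INT8 quantization in plain Python. It assumes a single scale shared by the whole tensor (per-tensor quantization), which is only one of several common schemes; the function names are illustrative, not any particular library's API.

```python
# A minimal sketch of symmetric, per-tensor INT8 quantization.
# Assumptions: weights are a plain list of floats and one scale is shared by
# the whole tensor; production toolchains operate on tensors and often use
# per-channel scales instead.

def quantize_int8(weights):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]

weights = [0.42, -1.27, 0.003, 0.88, -0.51]   # illustrative FP32 weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Each code fits in 1 byte instead of 4, so weight storage shrinks about 4x
# (plus one scale per tensor). The rounding error per weight is at most scale / 2.
max_error = max(abs(r - w) for r, w in zip(restored, weights))
print(codes, scale, max_error)
```

The only extra state is the scale, which is why quantized models can be dequantized on the fly or, on supporting hardware, multiplied directly in integer arithmetic.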

Benefits of quantization

Smaller model size

Faster inference

Lower memory bandwidth requirements

Better hardware efficiency

Trade-offs of quantization

Possible drop in accuracy if not calibrated correctly

Some layers may be more sensitive to low precision than others


Hardware support varies across deployment environments
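Calibration is where much of the accuracy risk lives. The sketch below compares two ways to pick an INT8 scale from sample activations: using the maximum absolute value, and using a 99th-percentile value that clips rare outliers. The data, the percentile, and the helper names are illustrative assumptions; which method wins depends on how much the clipped outliers matter to the model.

```python
import math

# Sketch: two ways to calibrate a symmetric INT8 scale from sample activations.
# Assumptions: hand-made calibration data and a nearest-rank percentile;
# real calibration tools offer additional methods.

def max_scale(samples):
    """Scale chosen so the largest |x| maps to 127 (nothing is clipped)."""
    return max(abs(x) for x in samples) / 127

def percentile_scale(samples, pct=99.0):
    """Scale chosen from the pct-th percentile of |x|; larger values get clipped."""
    mags = sorted(abs(x) for x in samples)
    rank = max(1, math.ceil(pct / 100 * len(mags)))   # nearest-rank, 1-based
    return mags[rank - 1] / 127

def mean_abs_error(samples, scale):
    """Average error after quantize -> clamp to [-127, 127] -> dequantize."""
    total = 0.0
    for x in samples:
        code = max(-127, min(127, round(x / scale)))
        total += abs(code * scale - x)
    return total / len(samples)

# Mostly small activations in [-1, 1], plus one rare outlier at 8.0.
samples = [((i % 21) - 10) / 10 for i in range(999)] + [8.0]

for name, scale in [("max", max_scale(samples)), ("p99", percentile_scale(samples))]:
    print(name, round(scale, 5), round(mean_abs_error(samples, scale), 5))
```

Here the single outlier forces the max-based scale to be coarse for every other value, so clipping it gives lower average error. With frequent or important outliers the balance can flip, which is why calibration is usually checked against model accuracy rather than chosen blindly.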

Quantization is one of the most practical and widely adopted optimization techniques in modern machine learning deployment, especially for LLMs, computer vision models, and edge AI systems.

2. Pruning

Pruning removes parameters, neurons, channels, or connections that contribute little to the model’s final output.

The goal is to eliminate redundancy from the network. In many neural networks, a large number of weights have minimal impact on performance. By removing these less important connections, we can reduce complexity and improve efficiency.

Common pruning approaches

Unstructured pruning, where individual weights are removed

Structured pruning, where entire filters, channels, or layers are removed

Magnitude-based pruning, where the weights with the smallest absolute values are removed first
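The sketch below contrasts unstructured magnitude pruning with a simple form of structured pruning on a toy weight matrix. It assumes each row stands in for an output channel and ranks rows by L2 norm, one common importance heuristic among several; the matrix, the 50% target, and the helper names are hypothetical.

```python
import math

# Sketch of two pruning styles on a small weight matrix (list of rows).
# Assumptions: rows stand in for output channels/filters; the 50% sparsity
# target and the L2-norm ranking are illustrative choices.

def magnitude_prune(matrix, sparsity):
    """Unstructured: zero the `sparsity` fraction of weights with the smallest |w|."""
    flat = sorted(abs(w) for row in matrix for w in row)
    k = int(sparsity * len(flat))           # number of weights to remove
    if k == 0:
        return [row[:] for row in matrix]
    threshold = flat[k - 1]
    # Ties at the threshold may remove slightly more than k weights.
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in matrix]

def prune_rows(matrix, keep):
    """Structured: keep the `keep` rows with the largest L2 norm, drop the rest."""
    norms = [math.sqrt(sum(w * w for w in row)) for row in matrix]
    ranked = sorted(range(len(matrix)), key=lambda i: norms[i], reverse=True)
    kept = sorted(ranked[:keep])             # preserve original row order
    return [matrix[i][:] for i in kept], kept

W = [
    [0.9, -0.05, 0.3, 0.01],
    [0.02, 0.04, -0.03, 0.01],
    [-0.7, 0.6, 0.02, -0.4],
    [0.1, -0.2, 0.05, 0.15],
]

unstructured = magnitude_prune(W, 0.5)     # still 4x4, half the entries zeroed
structured, kept_rows = prune_rows(W, 2)   # a genuinely smaller 2x4 matrix
print(unstructured)
print(kept_rows, structured)
```

The unstructured result keeps its original shape with zeros scattered through it, while the structured result is smaller, so a standard dense matrix multiply does proportionally less work. In a real network, removing output channels also means removing the matching inputs of the next layer.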

Benefits of pruning

Reduced parameter count

Lower storage requirements

Potential speedups in optimized runtimes

Better suitability for constrained deployment environments

Trade-offs of pruning

Aggressive pruning can hurt model quality

Unstructured pruning can cut parameter count without delivering real speedups on general-purpose hardware, which still runs dense matrix operations

Fine-tuning is often needed after pruning
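Because aggressive one-shot pruning tends to hurt quality, a common pattern is to raise sparsity in small steps and fine-tune in between. The sketch below uses a cubic ramp, one frequently used schedule; the final sparsity of 0.8, the step count, and the `prune_to` and `fine_tune` callables are hypothetical placeholders for whatever your framework provides.

```python
# Sketch: ramp sparsity up gradually and fine-tune between pruning steps,
# instead of pruning everything at once. The cubic ramp and the step counts
# are illustrative assumptions; `prune_to` and `fine_tune` are hypothetical
# placeholders for your framework's pruning and training calls.

def cubic_sparsity(step, total_steps, initial=0.0, final=0.8):
    """Sparsity target at `step`: rises quickly at first, then levels off at `final`."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return final + (initial - final) * (1.0 - progress) ** 3

def gradual_prune(model, total_steps, prune_to, fine_tune):
    """Alternate pruning to the scheduled target with a short recovery phase."""
    for step in range(1, total_steps + 1):
        target = cubic_sparsity(step, total_steps)
        model = prune_to(model, target)   # e.g. magnitude pruning to `target`
        model = fine_tune(model)          # let the remaining weights recover
    return model

schedule = [round(cubic_sparsity(s, 5), 3) for s in range(6)]
print(schedule)

# Dry run with stand-in callables that just record each sparsity target.
trace = gradual_prune([], 5, prune_to=lambda m, t: m + [t], fine_tune=lambda m: m)
print(trace)
```

Removing most weights early, while the network still has plenty of capacity, and taking smaller steps near the final target gives fine-tuning more room to recover accuracy.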

Pruning is especially valuable when teams want to slim down over-parameterized models while preserving the original model design as much as possible.

3. Distillation

Knowledge distillation transfers knowledge from a larger, more capable teacher model to a smaller student model.

Instead of training the student model only on hard labels, distillation lets the smaller model learn from the probability distributions or intermediate representations produced by the teacher. This often helps the student model achieve stronger performance than it would through standard training alone.
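The training objective described above is often written as a weighted sum of two terms: ordinary cross-entropy on the hard label, and a KL-divergence term that pulls the student's temperature-softened distribution toward the teacher's. The plain-Python sketch below follows that widely used formulation and covers only the output-distribution variant (matching intermediate representations would add further loss terms). The temperature `T=4.0`, the mixing weight `alpha=0.5`, and the example logits are illustrative assumptions, not tuned values.

```python
import math

# Sketch of a soft-target distillation loss for one example.
# Assumptions: T and alpha are illustrative; logits are plain lists, whereas
# training frameworks compute the same terms on batched tensors.

def softmax(logits, T=1.0):
    scaled = [z / T for z in logits]
    m = max(scaled)                          # subtract max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions (p = teacher, q = student)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(student_logits, teacher_logits, label, T=4.0, alpha=0.5):
    # Hard-label term: ordinary cross-entropy at temperature 1.
    hard = -math.log(softmax(student_logits)[label])
    # Soft-target term: match the teacher's softened distribution.
    # The T**2 factor keeps its gradient scale comparable as T changes.
    soft = kl_divergence(softmax(teacher_logits, T), softmax(student_logits, T)) * T ** 2
    return alpha * hard + (1 - alpha) * soft

teacher = [6.0, 2.5, -1.0]
good_student = [5.0, 2.0, -0.5]   # same ranking of classes as the teacher
poor_student = [5.0, -1.0, 2.0]   # swaps the two wrong classes

loss_good = distillation_loss(good_student, teacher, label=0)
loss_poor = distillation_loss(poor_student, teacher, label=0)
print(loss_good, loss_poor)
```

In this toy example both students give the correct class nearly the same probability, so the hard-label term barely separates them, while the soft term clearly penalizes the student whose ranking of the wrong classes disagrees with the teacher's.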

Why distillation works

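The teacher’s output distribution carries more information than a single hard label: it shows which incorrect answers the teacher considers plausible, and how plausible. By learning from these softer signals, the student model can often generalize better while remaining much lighter and faster.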
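Temperature is what makes those signals visible. In the sketch below, hypothetical teacher logits for an image of a cat over the classes [cat, dog, truck] are converted to probabilities at T=1 and T=4. The class names and logits are invented for illustration.

```python
import math

def softmax(logits, T=1.0):
    """Softmax with temperature T; higher T gives a flatter distribution."""
    scaled = [z / T for z in logits]
    m = max(scaled)                          # subtract max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

classes = ["cat", "dog", "truck"]
teacher_logits = [8.0, 5.0, -2.0]           # hypothetical teacher outputs for a cat image

for T in (1.0, 4.0):
    probs = softmax(teacher_logits, T)
    print(f"T={T}:", {c: round(p, 3) for c, p in zip(classes, probs)})
```

At T=1 nearly all probability sits on "cat", so the output looks almost like a hard label. At T=4 the teacher's judgment that a cat resembles a dog far more than a truck becomes a signal the student can learn from.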

Benefits of distillation

Stronger performance in compact models

Better speed-to-accuracy trade-off

Useful for production deployment of smaller language models

Helps make large model behavior more portable

Trade-offs of distillation

Requires a strong teacher model

Adds complexity to the training pipeline

Student performance still depends on architecture choice and distillation setup

Distillation is one of the most important strategies for production-grade generative AI systems, especially when teams want the benefits of a large model in a smaller serving footprint.


4. Sparsity

Sparsity refers to the fraction of zero-valued parameters in a model or matrix representation; sparsifying a model means increasing that fraction.

A sparse model contains many weights that are effectively inactive. If the hardware and software stack can exploit sparse computation efficiently, sparsity can reduce storage, memory movement, and compute cost.
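How much sparsity actually saves depends on the storage format. The sketch below measures sparsity and compares dense storage with a compressed sparse row (CSR) layout, which stores only the nonzero values plus index arrays. The 4-byte value and index sizes are assumptions; real libraries and accelerators use different widths and formats.

```python
# Sketch: measure sparsity and compare dense vs. compressed sparse row (CSR)
# storage. Assumptions: 4-byte values and 4-byte indices; real formats and
# index widths vary by library and hardware.

def sparsity(matrix):
    """Fraction of entries that are exactly zero."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for w in row if w == 0)
    return zeros / total

def to_csr(matrix):
    """Return (values, col_indices, row_ptr) holding only the nonzero entries."""
    values, col_indices, row_ptr = [], [], [0]
    for row in matrix:
        for j, w in enumerate(row):
            if w != 0:
                values.append(w)
                col_indices.append(j)
        row_ptr.append(len(values))
    return values, col_indices, row_ptr

def storage_bytes(matrix, value_bytes=4, index_bytes=4):
    """Return (dense_bytes, csr_bytes) under the assumed element sizes."""
    dense = sum(len(row) for row in matrix) * value_bytes
    values, cols, ptr = to_csr(matrix)
    sparse = len(values) * value_bytes + (len(cols) + len(ptr)) * index_bytes
    return dense, sparse

# 8x8 matrix with one nonzero per row (87.5% sparse).
M = [[1.0 if j == i else 0.0 for j in range(8)] for i in range(8)]
# 8x8 checkerboard (50% sparse).
H = [[1.0 if (i + j) % 2 == 0 else 0.0 for j in range(8)] for i in range(8)]

print(sparsity(M), storage_bytes(M))
print(sparsity(H), storage_bytes(H))
```

With one nonzero per row, CSR needs 100 bytes versus 256 for the dense matrix, but at 50% sparsity the index overhead makes CSR larger than dense (292 bytes). This is one reason moderate unstructured sparsity often yields little real benefit without specialized formats or hardware.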

Benefits of sparsity

Lower effective compute requirements

Reduced memory usage

Improved efficiency in specialized systems

Strong synergy with pruning techniques

Trade-offs of sparsity

Sparse models do not always run faster on general-purpose hardware

Performance gains depend heavily on compiler and accelerator support

Implementation benefits can be uneven across frameworks

Sparsity becomes especially powerful when paired with the right deployment infrastructure, such as optimized inference engines or hardware accelerators designed for sparse operations.
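A concrete example of hardware-friendly sparsity is the 2:4 pattern, in which at most two of every four consecutive weights are nonzero; NVIDIA GPUs starting with the Ampere generation include sparse Tensor Cores that accelerate matrix multiplications in this format. The sketch below only shows how the pattern can be imposed on a row of weights by magnitude; it does not cover the compressed storage or the kernels, and the helper names are hypothetical.

```python
# Sketch: enforce a 2:4 sparsity pattern on a row of weights by keeping the
# two largest-magnitude values in each group of four. Assumption: row length
# is a multiple of 4; real tools also handle layout and fine-tuning afterwards.

def prune_2_of_4(row):
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    out = []
    for g in range(0, len(row), 4):
        group = row[g:g + 4]
        # Indices of the two entries with the largest absolute value.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        out.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return out

def is_2_of_4(row):
    """True if every group of four has at most two nonzero entries."""
    return all(sum(1 for w in row[g:g + 4] if w != 0) <= 2 for g in range(0, len(row), 4))

row = [0.8, -0.1, 0.05, -0.6, 0.2, 0.3, -0.9, 0.01]
pruned = prune_2_of_4(row)
print(pruned, is_2_of_4(pruned))
```

Because the pattern is fixed and regular, hardware can store just the kept values plus a small per-group index and skip the zeros predictably, which is much harder with arbitrary unstructured sparsity.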

Which Model Compression Strategy Is Best?

There is no single best model compression technique. The right choice depends on your goals:

Choose quantization when you need fast wins in inference speed and memory savings

Choose pruning when your model has obvious redundancy and you want to reduce parameter count

Choose distillation when you want a smaller model that retains much of a larger model’s intelligence

Choose sparsity when your deployment stack can fully leverage sparse computation

In practice, the best results often come from combining multiple compression strategies. For example, teams may distill a model first, then quantize it for deployment, and apply pruning or sparsity-aware optimization where supported.
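A rough memory estimate helps show why combining strategies pays off. The sketch below counts weight storage only, for a hypothetical 7-billion-parameter teacher distilled into a hypothetical 1-billion-parameter student that is then quantized. Both parameter counts are illustrative assumptions, and activations, caches, and runtime overhead are ignored.

```python
# Back-of-the-envelope weight memory for a distill-then-quantize pipeline.
# Assumptions: hypothetical parameter counts; only weights are counted
# (no activations, KV caches, or runtime overhead); 1 GB = 1e9 bytes.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "fp8": 1, "int8": 1}

def weight_gb(num_params, dtype):
    """Weight storage in decimal gigabytes for a given numeric format."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

teacher_params = 7e9   # hypothetical 7B-parameter teacher
student_params = 1e9   # hypothetical 1B-parameter distilled student

stages = [
    ("teacher, fp32", weight_gb(teacher_params, "fp32")),
    ("teacher, fp16", weight_gb(teacher_params, "fp16")),
    ("student, fp16", weight_gb(student_params, "fp16")),
    ("student, int8", weight_gb(student_params, "int8")),
]
for name, gb in stages:
    print(f"{name:>14}: {gb:5.1f} GB")
```

Under these assumptions, weight storage falls from 28 GB for the FP32 teacher to about 1 GB for the INT8 student. Pruning or sparsity could reduce this further, but only if the runtime stores and executes the sparse format efficiently.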

Model Compression in the Generative AI Era

As generative AI adoption grows, model compression is becoming a strategic advantage. Enterprises want AI systems that are:

Faster to serve

Cheaper to operate

Easier to scale

Portable across cloud, edge, and on-device environments

More sustainable from a compute and energy perspective

That makes model optimization a critical part of the AI lifecycle, not just an optional research exercise.

Whether you are deploying transformers, vision models, recommendation systems, or multimodal architectures, compression techniques can help bridge the gap between state-of-the-art performance and real-world usability.

Final Thoughts

Model compression is not just about shrinking models. It is about building AI systems that are practical, efficient, and ready for production.

Quantization, pruning, distillation, and sparsity each offer distinct benefits. Understanding when and how to use them can dramatically improve deployment efficiency while preserving the value of your machine learning models.

As AI systems continue to scale, the ability to optimize models for latency, cost, and portability will become a core skill for every AI engineer, ML researcher, and generative AI builder.

If you are working on machine learning infrastructure, LLM optimization, or edge AI deployment, model compression deserves a central place in your playbook.

#AI #ArtificialIntelligence #MachineLearning #DeepLearning #ModelCompression #Quantization #Pruning #KnowledgeDistillation #Sparsity #LLM #GenerativeAI #EdgeAI #MLOps #InferenceOptimization #AIInfrastructure #ModelOptimization


Sagar Shankaran is the founder of CallSphere, where he builds production AI voice and chat agents deployed across healthcare, hospitality, real estate, and home services. He writes about agentic AI, LLM engineering, and shipping voice agents that handle real calls in production.
