Unlocking the Potential of LLM Pretraining with Self-Supervised Learning

Understanding LLM Pretraining: The Power of Self-Supervised Learning

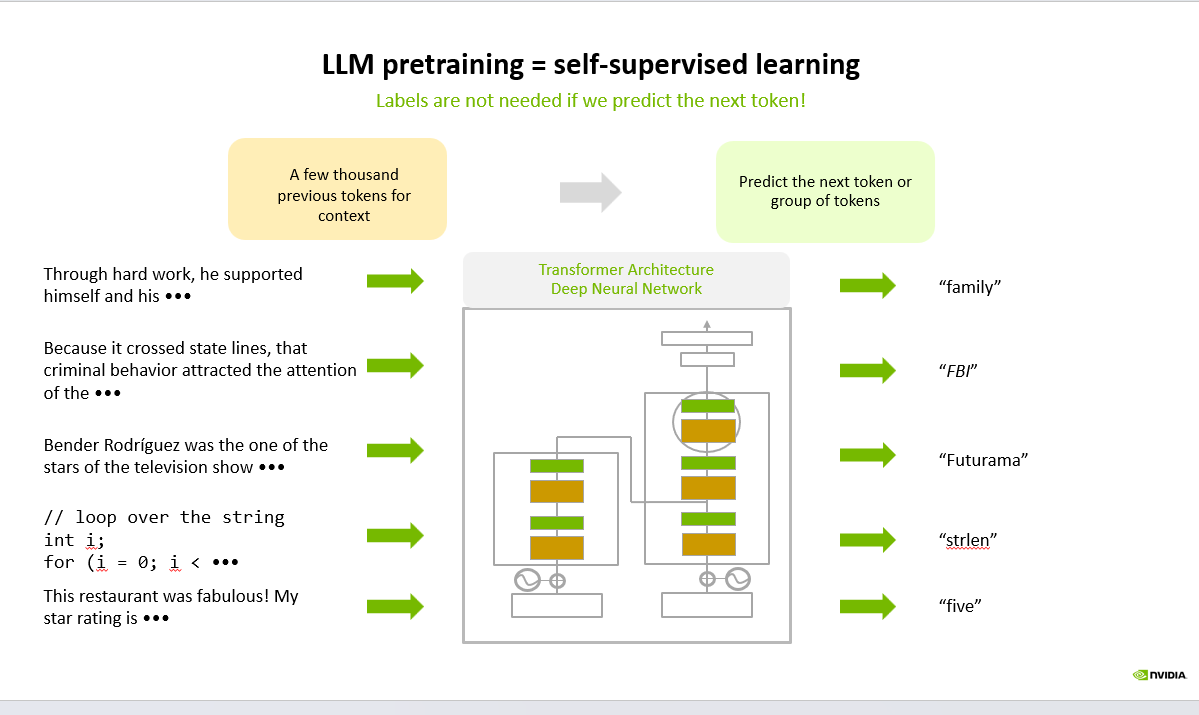

Large Language Models (LLMs) are pretrained very differently from traditional supervised machine learning systems. Instead of relying on manually labeled datasets, they use self-supervised learning, a method in which the data itself provides the learning signal.

At the core of this approach is a simple objective: predict the next token in a sequence.

Given a partial sentence, the model learns to infer what comes next based on patterns observed across vast amounts of text. For example:

“Through hard work, he supported himself and his …” → family

“Because it crossed state lines, the case was handled by the …” → FBI

“Bender Rodríguez is a character from …” → Futurama
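Prompts like the ones above can be produced mechanically from raw text. The sketch below is a minimal illustration, not how any particular LLM is trained. It assumes plain whitespace splitting in place of a real subword tokenizer (such as BPE), and the function name `next_token_pairs` is hypothetical.

```python
def next_token_pairs(text: str) -> list[tuple[list[str], str]]:
    """Turn raw text into (context, target) pairs for next-token prediction.

    Assumption: whitespace splitting stands in for a real subword tokenizer.
    """
    tokens = text.split()
    # Every prefix of the sequence is an input; the token that follows it is the label.
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


if __name__ == "__main__":
    sentence = "Through hard work, he supported himself and his family"
    for context, target in next_token_pairs(sentence):
        print(" ".join(context), "->", target)
```

In practice, a transformer does not loop over prefixes one at a time. It reads the whole token sequence at once and, with a causal attention mask, predicts the token at every position in parallel, so the targets are simply the inputs shifted by one position.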

```mermaid
flowchart LR
    IN(["Input prompt"])
    subgraph PRE["Pre processing"]
        TOK["Tokenize"]
        EMB["Embed"]
    end
    subgraph CORE["Model Core"]
        ATTN["Self attention layers"]
        MLP["Feed forward layers"]
    end
    subgraph POST["Post processing"]
        SAMP["Sampling"]
        DETOK["Detokenize"]
    end
    OUT(["Generated text"])
    IN --> TOK --> EMB --> ATTN --> MLP --> SAMP --> DETOK --> OUT
    style IN fill:#f1f5f9,stroke:#64748b,color:#0f172a
    style CORE fill:#ede9fe,stroke:#7c3aed,color:#1e1b4b
    style OUT fill:#059669,stroke:#047857,color:#fff
```
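The "Sampling" stage of this pipeline turns the model's output scores (logits) into a concrete next token. Here is a minimal sketch of temperature sampling. It assumes a toy three-word vocabulary and hand-picked logits rather than a real model's output, and `softmax` and `sample_next_token` are hypothetical helper names.

```python
import math
import random


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Convert raw scores into probabilities; temperature must be > 0."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(logits, vocab, temperature=1.0, rng=random):
    """Draw one token from the temperature-scaled distribution."""
    probs = softmax(logits, temperature)
    # random.choices picks one item with probability proportional to its weight.
    return rng.choices(vocab, weights=probs, k=1)[0]


if __name__ == "__main__":
    vocab = ["family", "friends", "dog"]  # hypothetical toy vocabulary
    logits = [3.0, 1.0, 0.2]              # hypothetical model scores
    print(sample_next_token(logits, vocab, temperature=0.7, rng=random.Random(42)))
```

Lower temperatures sharpen the distribution toward the highest-scoring token (approaching greedy decoding), while higher temperatures flatten it and make output more varied. Sampling belongs to generation; during pretraining, the model's probabilities are instead scored against the actual next token.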

Because the correct continuation is already present in the text, every position in every sequence supplies a training example, eliminating the need for explicit human annotations.
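Concretely, pretraining minimizes the average negative log-likelihood (cross-entropy) of each actual next token given the tokens before it. The sketch below computes that loss with a deliberately tiny stand-in model: a count-based bigram model with add-one smoothing that conditions only on the previous token. This is an illustrative assumption, not an LLM, and the function names are hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_bigram(tokens: list[str]) -> defaultdict:
    """Count how often each token follows each previous token.

    The 'labels' come straight from the text: no annotation is needed.
    """
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_prob(counts, vocab: set[str], prev: str, nxt: str) -> float:
    """P(nxt | prev) with add-one (Laplace) smoothing over the vocabulary."""
    c = counts[prev]
    return (c[nxt] + 1) / (sum(c.values()) + len(vocab))


def cross_entropy(counts, vocab: set[str], tokens: list[str]) -> float:
    """Average negative log-likelihood of each actual next token, in nats."""
    nll = [-math.log(next_token_prob(counts, vocab, p, n))
           for p, n in zip(tokens, tokens[1:])]
    return sum(nll) / len(nll)


if __name__ == "__main__":
    tokens = "the cat sat on the mat".split()
    vocab = set(tokens)
    model = train_bigram(tokens)
    # Loss on the training text itself, for illustration only.
    print(f"average next-token loss: {cross_entropy(model, vocab, tokens):.3f} nats")
```

An LLM replaces the count table with a transformer that conditions on the entire preceding context and lowers this same kind of loss by gradient descent. In both cases, the targets are just the next tokens already present in the text.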

Why this approach matters

First, it enables massive scalability. Since the internet and enterprise data sources contain enormous volumes of unlabeled text, models can be trained on diverse and rich datasets without costly labeling processes.

Second, it leads to strong generalization. By learning language patterns, context, and relationships across domains, LLMs develop capabilities that transfer across tasks such as question answering, summarization, and code generation.

Third, it forms the foundation for downstream alignment. Techniques such as instruction tuning and reinforcement learning from human feedback (RLHF) build on this pretrained base to make models more useful and better aligned with human intent.

The bigger picture

Self-supervised pretraining is not just an optimization trick; it is a central reason modern AI systems can generate fluent, human-like language at scale. By learning to predict raw text, LLMs pick up how language works and, to some extent, how reasoning works.

As AI systems continue to evolve, this paradigm remains central to building more capable, adaptable, and efficient models.

#AI #MachineLearning #LLM #GenerativeAI #DeepLearning #ArtificialIntelligence #DataScience #NLP #TechInnovation #AIEngineering
