Evaluating AI Pipelines: From LLMs to Real-World Impact

The rapid rise of Large Language Models (LLMs) has shifted the conversation from “Can we build AI?” to “How do we evaluate AI effectively?”

Whether you're working with Retrieval-Augmented Generation (RAG), fine-tuned models, or enterprise chatbots, evaluation is no longer optional—it’s a core part of building reliable AI systems.

Why AI Evaluation Matters

In traditional software, correctness is binary—you either pass tests or you don’t. AI systems are fundamentally different. Outputs are probabilistic, context-dependent, and often subjective.

Without proper evaluation:

Hallucinations go unnoticed

Retrieval quality degrades silently

Model updates break existing workflows

User trust erodes

Evaluation is your guardrail.

A Modern AI Pipeline (What Are We Evaluating?)

A typical enterprise AI pipeline consists of multiple interconnected components:

```mermaid
flowchart LR
    PR(["PR opened"])
    UNIT["Unit tests"]
    EVAL["Eval harness<br/>PromptFoo or Braintrust"]
    GOLD[("Golden set<br/>200 tagged cases")]
    JUDGE["LLM as judge<br/>plus regex graders"]
    SCORE["Aggregate score<br/>and per slice"]
    GATE{"Score regress<br/>more than 2 percent?"}
    BLOCK(["Block merge"])
    MERGE(["Merge to main"])

    PR --> UNIT --> EVAL --> GOLD --> JUDGE --> SCORE --> GATE
    GATE -->|Yes| BLOCK
    GATE -->|No| MERGE

    style EVAL fill:#4f46e5,stroke:#4338ca,color:#fff
    style GATE fill:#f59e0b,stroke:#d97706,color:#1f2937
    style BLOCK fill:#dc2626,stroke:#b91c1c,color:#fff
    style MERGE fill:#059669,stroke:#047857,color:#fff
```

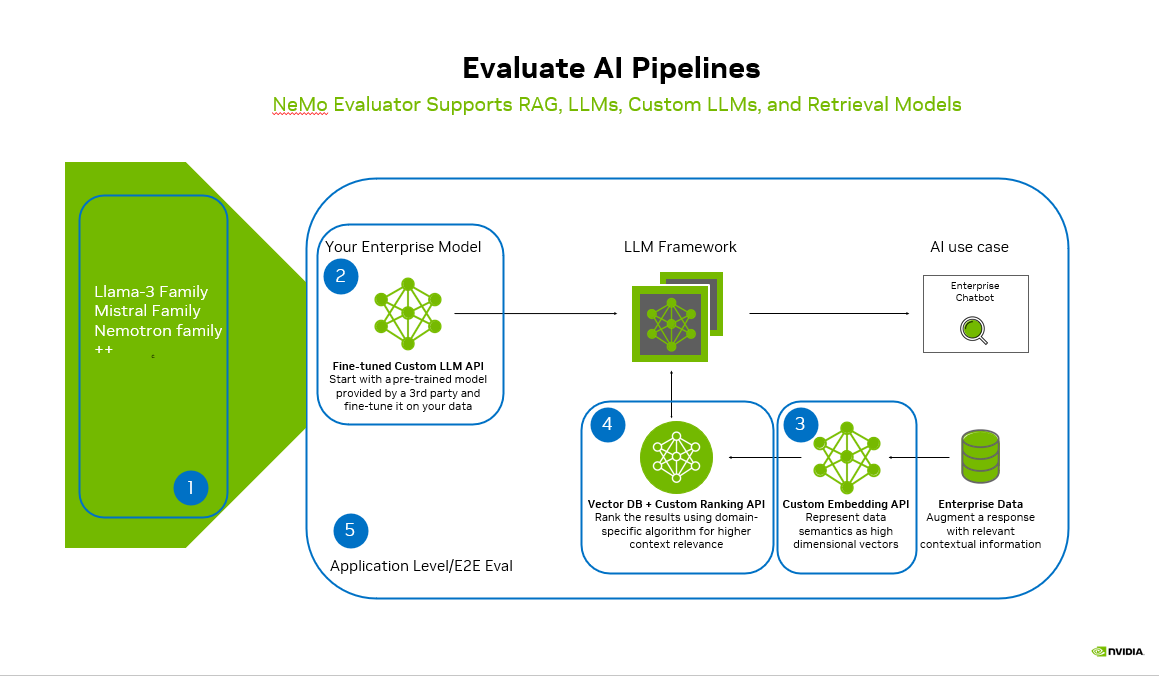
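The gate at the end of the flowchart can be sketched in a few lines of Python. This is a minimal illustration, not the behavior of PromptFoo or Braintrust: the function names, the slice tags, and the choice to read "2 percent" as an absolute drop in pass rate are all assumptions made for the example.

```python
from collections import defaultdict

# Assumption: "more than 2 percent" is read as an absolute drop in pass rate.
REGRESSION_THRESHOLD = 0.02


def aggregate(results):
    """Turn (slice_tag, passed) pairs into an overall pass rate and per-slice pass rates."""
    per_slice = defaultdict(list)
    for tag, passed in results:
        per_slice[tag].append(1.0 if passed else 0.0)
    overall = sum(1.0 if passed else 0.0 for _, passed in results) / len(results)
    return overall, {tag: sum(v) / len(v) for tag, v in per_slice.items()}


def should_block_merge(baseline_score, candidate_score, threshold=REGRESSION_THRESHOLD):
    """Return True when the candidate falls more than `threshold` below the baseline."""
    return (baseline_score - candidate_score) > threshold


if __name__ == "__main__":
    # Hypothetical graded results: slice tag plus pass/fail from the graders.
    results = [("billing", True), ("billing", False), ("refunds", True), ("refunds", True)]
    overall, per_slice = aggregate(results)
    print(overall, per_slice)
    print("block merge:", should_block_merge(0.80, overall))
```

Reporting the per-slice rates next to the aggregate matters because a flat overall score can hide a sharp drop in one slice.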

Foundation Models: e.g., Llama, Mistral, and Nemotron families

Custom / Fine-tuned LLMs: adapting base models to domain-specific data

Embedding Models: converting text into high-dimensional vectors

Vector Databases + Ranking: retrieving and re-ranking relevant context

Application Layer: chatbots, copilots, or enterprise workflows

Each layer introduces its own failure modes—and must be evaluated independently and end-to-end.

Key Evaluation Layers

1. Model-Level Evaluation

Accuracy on domain-specific tasks

Hallucination rate

Instruction-following capability

Latency and cost
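Two of the model-level metrics above, task accuracy and latency, can be measured with a very small harness. The sketch below assumes a hypothetical `model_fn` callable that takes a prompt and returns a string, and it uses case-insensitive exact match as a stand-in for whatever grading a real task needs. It does not attempt to measure hallucination rate or cost.

```python
import statistics
import time


def evaluate_model(model_fn, cases):
    """Run `model_fn` over (prompt, expected) pairs and collect accuracy and latency."""
    correct = 0
    latencies = []
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = model_fn(prompt)
        latencies.append(time.perf_counter() - start)
        # Exact match (ignoring case and surrounding whitespace) is a simplification.
        if answer.strip().lower() == expected.strip().lower():
            correct += 1
    return {
        "accuracy": correct / len(cases),
        "mean_latency_s": statistics.mean(latencies),
        "max_latency_s": max(latencies),
    }


if __name__ == "__main__":
    # Hypothetical stub standing in for a real model call.
    canned = {"2+2?": "4", "Capital of France?": "Lyon"}
    report = evaluate_model(lambda p: canned[p], [("2+2?", "4"), ("Capital of France?", "Paris")])
    print(report)
```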

2. Retrieval Evaluation (RAG Systems)

Recall@K (Did we retrieve the right documents?)

Precision (How relevant are retrieved chunks?)

Context quality
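Recall@K and precision over the top K results are straightforward to compute once each query has a labeled set of relevant document IDs. The following is a sketch under that assumption. The document IDs are invented for illustration, and precision is divided by the number of results actually returned, which is one of several common conventions.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents that appear in the top-k retrieved IDs."""
    relevant_set = set(relevant)
    if not relevant_set:
        return 0.0
    hits = set(retrieved[:k]) & relevant_set
    return len(hits) / len(relevant_set)


def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved IDs that are relevant."""
    relevant_set = set(relevant)
    top_k = retrieved[:k]
    if not top_k:
        return 0.0
    return sum(1 for doc_id in top_k if doc_id in relevant_set) / len(top_k)


if __name__ == "__main__":
    retrieved = ["d1", "d7", "d3", "d9"]   # ranked retriever output (hypothetical IDs)
    relevant = {"d1", "d3", "d5"}          # labeled ground truth for the query
    print(recall_at_k(retrieved, relevant, k=3))
    print(precision_at_k(retrieved, relevant, k=3))
```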

3. Embedding Evaluation

Semantic similarity performance

Clustering quality

Drift over time
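One simple way to watch for embedding drift is to compare the centroid of a reference batch of vectors with the centroid of a recent batch. The sketch below uses cosine distance between centroids. This is only one possible drift signal, chosen here for brevity, and it assumes non-zero vectors of equal dimension.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def centroid_drift(reference, current):
    """Cosine distance between batch centroids: 0.0 means the same direction."""
    return 1.0 - cosine_similarity(centroid(reference), centroid(current))


if __name__ == "__main__":
    last_month = [[1.0, 0.0], [0.9, 0.1]]   # toy 2-d embeddings
    this_week = [[0.6, 0.4], [0.5, 0.5]]
    print(centroid_drift(last_month, this_week))
```

A centroid comparison misses changes that keep the mean fixed, such as clusters spreading out, so it works best as a cheap first alarm.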

4. Ranking Evaluation

Relevance scoring effectiveness

Context ordering impact on LLM output
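Ranking quality is often summarized with nDCG@K, which rewards placing the most relevant items first. The article does not name a specific ranking metric, so nDCG is an assumption here. The sketch uses linear gain and computes the ideal ordering from the labels of the returned items only.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k graded relevance labels."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k):
    """DCG normalized by the DCG of the same labels sorted best-first."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


if __name__ == "__main__":
    # Graded relevance of results in the order the ranker returned them (hypothetical labels).
    print(ndcg_at_k([3, 2, 1], k=3))
    print(ndcg_at_k([1, 2, 3], k=3))
```

Comparing nDCG before and after a re-ranker change, alongside the end-to-end answer score, shows whether a change in context ordering actually affects the LLM output.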

5. End-to-End Evaluation

Final answer correctness

Groundedness (Is the answer supported by retrieved data?)

User satisfaction
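Groundedness is usually scored with an LLM judge or an entailment model, but a crude lexical proxy is enough to illustrate the idea: count how many answer sentences are mostly covered by words from the retrieved context. The function name, the 0.6 overlap threshold, and the sample texts below are assumptions for this sketch. Token overlap will miss paraphrases and can be fooled by negation.

```python
import re


def _tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness(answer, contexts, min_overlap=0.6):
    """Fraction of answer sentences whose tokens mostly appear in the retrieved contexts."""
    context_tokens = set()
    for chunk in contexts:
        context_tokens |= _tokens(chunk)
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        toks = _tokens(sentence)
        if toks and len(toks & context_tokens) / len(toks) >= min_overlap:
            supported += 1
    return supported / len(sentences)


if __name__ == "__main__":
    contexts = ["Refunds are processed within 5 business days."]
    answer = "Refunds are processed within 5 business days. Shipping is free worldwide."
    print(groundedness(answer, contexts))
```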

Common Pitfalls

Evaluating only the LLM, ignoring retrieval

Relying solely on human evaluation (not scalable)

No regression testing after model updates

Ignoring data quality in embeddings

Best Practices

Combine automated + human evaluation

Build golden datasets for benchmarking

Track metrics across every pipeline stage

Use A/B testing for model changes

Continuously monitor production outputs

The Future: Continuous AI Evaluation

Evaluation is moving toward:

Real-time monitoring

Feedback-driven learning loops

Automated guardrails and policy enforcement
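Real-time monitoring can start as something as small as a rolling average over recent evaluation scores with an alert threshold. The class below is a minimal sketch of that idea. The class name, the window size, and the threshold are illustrative assumptions, and a production setup would normally feed this from logged scores rather than in-process calls.

```python
from collections import deque


class RollingMonitor:
    """Track the mean of the most recent scores and flag when it drops too low."""

    def __init__(self, window=100, min_score=0.8):
        self.scores = deque(maxlen=window)  # old scores fall out automatically
        self.min_score = min_score

    def record(self, score):
        """Add a score and return True if the rolling mean is below the threshold."""
        self.scores.append(score)
        return self.mean() < self.min_score

    def mean(self):
        return sum(self.scores) / len(self.scores)


if __name__ == "__main__":
    monitor = RollingMonitor(window=3, min_score=0.5)
    for score in [1.0, 0.0, 0.0, 0.0]:
        print(score, "alert" if monitor.record(score) else "ok")
```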

Tools like NVIDIA NeMo Evaluator are making it easier to evaluate across the entire AI pipeline—from embeddings to application-level responses.

Final Thoughts

Building AI is no longer the hardest part.

Evaluating, monitoring, and improving it continuously—that’s where real engineering begins.

If you're working on LLM systems today, ask yourself:

What part of your pipeline are you not evaluating yet?

#AI #MachineLearning #LLM #RAG #DataEngineering #MLOps #GenerativeAI

## Evaluating AI Pipelines: From LLMs to Real-World Impact (operator perspective)

Behind Evaluating AI Pipelines: From LLMs to Real-World Impact sits a smaller, more useful question: which production constraint just got cheaper to solve? The candidates are first-token latency, language coverage, structured outputs, and tool-call reliability. On the CallSphere side, the practical filter is simple: would this make a 90-second appointment-booking call faster, cheaper, or more reliable? If the answer is "maybe in a benchmark," it doesn't ship to production.

## Where a junior engineer should actually start

If you're new to agentic AI and want to be useful in three weeks, skip the framework war and start with one stack: the OpenAI Agents SDK. Build a single-agent app that does one thing well (book an appointment, qualify a lead, escalate a complaint). Then add a second specialist agent with an explicit handoff: the receiving agent gets a structured payload (intent, entities, prior tool results), not a transcript. That's the moment the abstractions click.

From there, the next two skills that compound are evals (write the regression case the moment you find a bug, and refuse to merge anything that fails the suite) and observability (log the tool-call graph, not just the final answer). Frameworks come and go; those two habits transfer. Once you've shipped that first multi-agent app end-to-end, the rest of the agentic AI literature reads differently: you can tell which papers are solving real production problems and which are solving demo problems.

## FAQs

**Q: Why isn't evaluating AI pipelines an automatic upgrade for a live call agent?**

A: Most of the time it isn't, and that's the right starting assumption. The relevant test is whether it improves at least one of: p95 first-token latency, tool-call argument accuracy on noisy inputs, multi-turn handoff stability, or per-session cost. Real Estate deployments run 10 specialist agents with 30 tools, including vision-on-photos for listing intake and follow-up.

**Q: How do you sanity-check evaluating AI pipelines before pinning the model version?**

A: The eval gate is unsentimental: a regression suite that simulates real call traffic (noisy ASR, partial inputs, tool-call timeouts) measures four numbers, and a candidate has to win on three of four without losing badly on the fourth. Anything else is treated as a blog post, not a stack change.

**Q: Where does evaluating AI pipelines fit in CallSphere's 37-agent setup?**

A: In a CallSphere deployment, new model and API capabilities land first in the post-call analytics pipeline (lower stakes, async, easy to roll back) and only later in the live realtime path. Today the vertical most likely to absorb new capability first is Salon, which already runs the largest share of production traffic.

## See it live

Want to see after-hours escalation agents handle real traffic? Walk through https://escalation.callsphere.tech or grab 20 minutes with the founder: https://calendly.com/sagar-callsphere/new-meeting.