How Do You Really Know If Your LLM Is Good Enough? A Guide to Controlled Evaluation Metrics

If you're building, fine-tuning, or deploying large language models, there's one question that should keep you up at night: How do you measure what "good" actually looks like?

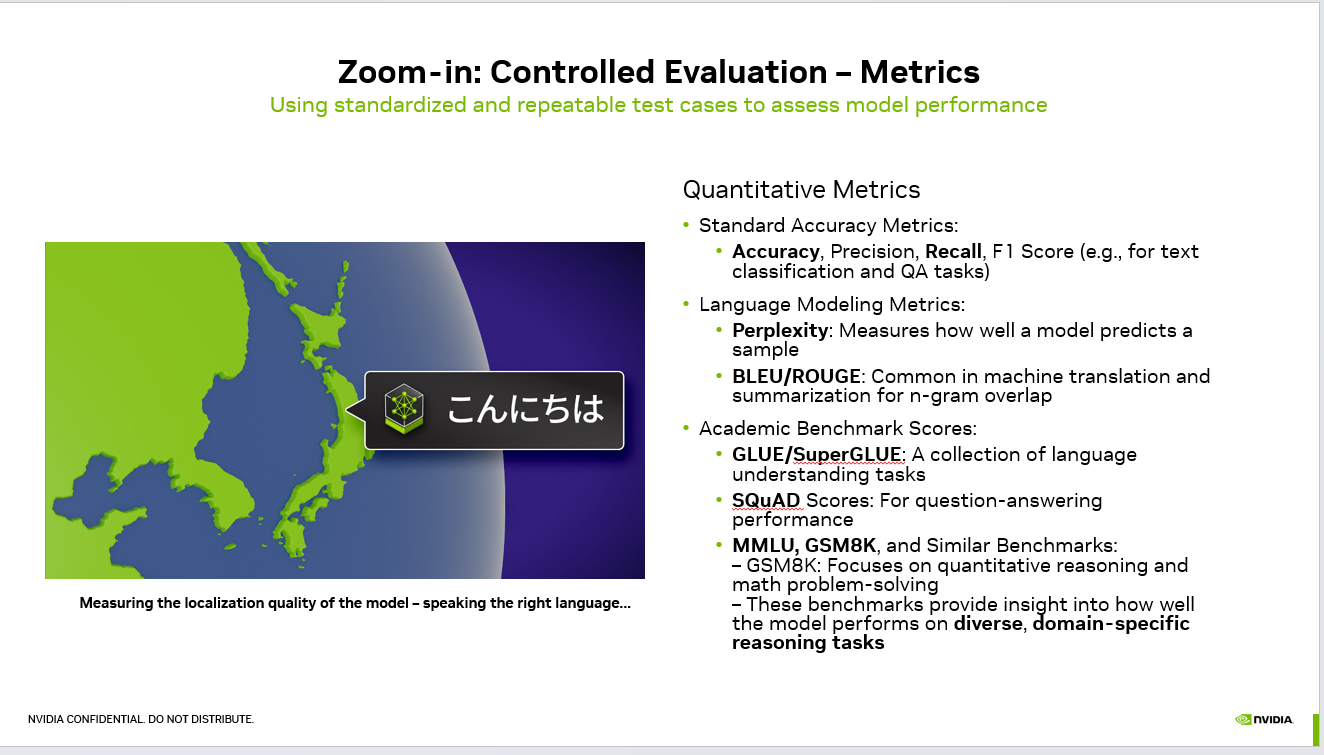

Vibes-based evaluation doesn't scale. Neither does cherry-picking impressive outputs for a demo. What you need is controlled evaluation — standardized, repeatable test cases that give you an honest picture of model performance.

Here's a breakdown of the quantitative metrics that matter, and when to use each one.

Standard Accuracy Metrics

These are your bread and butter for classification and question-answering tasks:

Accuracy tells you the percentage of correct predictions overall. Simple, but can be misleading on imbalanced datasets.

Precision answers: "Of everything the model flagged as positive, how much was actually positive?" Critical when false positives are expensive: think spam detection, where a legitimate email sent to the junk folder is a real cost.

Recall answers the complementary question: "Of all the actual positives, how many did the model catch?" This is your go-to when missing a true positive is costly, as in disease screening.

F1 Score combines precision and recall into a single number: their harmonic mean, which stays low unless both are high. When you can't afford to optimize one at the expense of the other, F1 is your north star.
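To make the four definitions above concrete, here is a minimal sketch in plain Python. The function name `binary_metrics` and the toy labels are illustrative assumptions, not part of any particular library. The example deliberately uses an imbalanced dataset to show how accuracy can look healthy while recall and F1 collapse.

```python
from dataclasses import dataclass


@dataclass
class BinaryMetrics:
    accuracy: float
    precision: float
    recall: float
    f1: float


def binary_metrics(y_true: list[int], y_pred: list[int]) -> BinaryMetrics:
    """Compute accuracy, precision, recall, and F1 for 0/1 labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when nothing was predicted or labeled positive.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return BinaryMetrics(accuracy, precision, recall, f1)


# Hypothetical imbalanced test set: 95 negatives, 5 positives.
y_true = [0] * 95 + [1] * 5
# A degenerate "model" that always predicts the negative class.
always_negative = [0] * 100

m = binary_metrics(y_true, always_negative)
print(m)  # accuracy is 0.95, while precision, recall, and F1 are all 0.0
```

In practice you would probably reach for an established library rather than hand-rolling these, but the arithmetic is worth seeing once.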

Language Modeling Metrics

When you're evaluating the model's core language capabilities:

[Figure: flowchart of a CI evaluation gate. PR opened -> Unit tests -> Eval harness (PromptFoo or Braintrust) -> Golden set (200 tagged cases) -> LLM as judge plus regex graders -> Aggregate score and per slice -> Gate: "Score regress more than 2 percent?" Yes -> Block merge; No -> Merge to main.]
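The final gate in the flowchart above can be sketched in a few lines. This is an illustration under stated assumptions, not the configuration of PromptFoo or Braintrust: the function name `find_regressions`, the slice names, and the scores are hypothetical, and "2 percent" is interpreted here as an absolute drop of 0.02 on a 0-to-1 score.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    max_drop: float = 0.02,
) -> list[str]:
    """Return the slices whose score dropped by more than max_drop.

    Scores are assumed to be on a 0-to-1 scale. A slice that is missing
    from the candidate run is treated as a regression so that silently
    dropped test cases cannot pass the gate.
    """
    regressions = []
    for name, base_score in baseline.items():
        cand_score = candidate.get(name)
        if cand_score is None or base_score - cand_score > max_drop:
            regressions.append(name)
    return regressions


# Hypothetical aggregate and per-slice scores from two eval runs.
baseline = {"overall": 0.91, "billing": 0.88, "scheduling": 0.93}
candidate = {"overall": 0.90, "billing": 0.83, "scheduling": 0.94}

regressions = find_regressions(baseline, candidate)
if regressions:
    print("Block merge, regressed slices:", regressions)
else:
    print("Merge to main")
```

Checking per slice as well as overall matters: in this toy data the overall score barely moves, but the `billing` slice drops by five points and blocks the merge.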

Perplexity measures how well a model predicts a sample of text. Lower perplexity means the model is less "surprised" by the data — a strong indicator of language fluency. It's particularly useful during pre-training and fine-tuning to track whether the model is actually learning.
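Concretely, perplexity is the exponential of the average negative log-likelihood per token. The sketch below assumes you already have per-token natural-log probabilities from your model; how you obtain them depends on your framework, and the input lists here are made up for illustration.

```python
import math


def perplexity(token_logprobs: list[float]) -> float:
    """Perplexity from per-token natural-log probabilities."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    avg_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(avg_nll)


# A model that spreads probability evenly over 4 options at every step
# is as "surprised" as a fair 4-sided die: perplexity is approximately 4.
uniform = [math.log(0.25)] * 10

# A model that assigns 0.9 to each observed token is far less surprised.
confident = [math.log(0.9)] * 10

print(perplexity(uniform))
print(perplexity(confident))
```

A useful intuition: a perplexity of k means the model is, on average, about as uncertain as if it were choosing uniformly among k tokens.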

BLEU and ROUGE are the workhorses of machine translation and summarization evaluation. Both measure n-gram overlap between generated and reference text, but from different angles — BLEU focuses on precision (is the generated text accurate?) while ROUGE focuses on recall (did it capture the key information?).
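The precision-versus-recall distinction is easier to see with numbers. The sketch below computes clipped n-gram precision (the core quantity inside BLEU) and n-gram recall (the core of ROUGE-N) for a single reference. It is a simplification: real BLEU also combines several n-gram orders and applies a brevity penalty, and the function name `overlap_scores` is just an illustrative choice.

```python
from collections import Counter


def ngrams(tokens: list[str], n: int) -> Counter:
    """Count the n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def overlap_scores(candidate: str, reference: str, n: int = 1) -> tuple[float, float]:
    """Return (precision, recall) of n-gram overlap for one reference."""
    cand = ngrams(candidate.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    # Counter intersection keeps the minimum count per n-gram, so a word
    # repeated in the candidate is only credited as often as it appears
    # in the reference ("clipping").
    overlap = sum((cand & ref).values())
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    return precision, recall


reference = "the cat sat on the mat"

# A very short output: everything it says is in the reference,
# but it misses most of the content.
short_p, short_r = overlap_scores("the cat", reference)

# A padded output: it covers the whole reference,
# but adds words the reference does not contain.
padded_p, padded_r = overlap_scores("the the the cat sat on the mat today", reference)

print(f"short:  precision={short_p:.2f} recall={short_r:.2f}")
print(f"padded: precision={padded_p:.2f} recall={padded_r:.2f}")
```

The short output scores perfect precision with poor recall, and the padded output does the reverse, which is why translation and summarization evaluations often report both families of metrics.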

Academic Benchmarks

These standardized benchmarks let you compare your model against the field:

GLUE/SuperGLUE — Collections of language understanding tasks that test everything from sentiment analysis to textual entailment. SuperGLUE was introduced when models started saturating the original GLUE benchmark.

SQuAD — The Stanford Question Answering Dataset remains a gold standard for evaluating reading comprehension and extractive QA capabilities.

MMLU — Massive Multitask Language Understanding tests knowledge across 57 subjects, from STEM to humanities. It's one of the best proxies for general knowledge and reasoning.

GSM8K — Focused specifically on grade-school math word problems, this benchmark reveals how well your model handles quantitative reasoning and multi-step problem-solving.
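Most of these benchmarks come down to comparing a model answer against a reference, and the comparison rules matter more than you might expect. Below is a rough sketch of two common patterns: normalized exact match in the style used for extractive QA, and final-number comparison for math word problems. It assumes GSM8K-style reference solutions that end with `#### <answer>`. The helper names and the toy examples are illustrative, and an official evaluation script may differ in details.

```python
import re
import string
from typing import Optional


def normalize_answer(text: str) -> str:
    """Lowercase, strip punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> bool:
    """Extractive-QA style exact match after normalization."""
    return normalize_answer(prediction) == normalize_answer(reference)


def last_number(text: str) -> Optional[str]:
    """Return the last number mentioned in the text, without thousands separators."""
    matches = re.findall(r"-?\d[\d,]*(?:\.\d+)?", text)
    return matches[-1].replace(",", "") if matches else None


def math_answer_correct(model_output: str, reference_solution: str) -> bool:
    """Compare the model's last number to the value after '####' in the reference."""
    gold = reference_solution.split("####")[-1].strip().replace(",", "")
    pred = last_number(model_output)
    return pred is not None and float(pred) == float(gold)


# Toy examples, not taken from the actual datasets.
print(exact_match("The Eiffel Tower.", "eiffel tower"))
print(math_answer_correct(
    "She sells 9 eggs at $2 each, so she makes $18.",
    "9 * 2 = 18\n#### 18",
))
```

Taking the last number in the output is a heuristic. It can misfire when the model keeps talking after its answer, so it is worth spot-checking a sample of graded outputs by hand.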

The Bigger Picture

No single metric tells the whole story. A model might ace MMLU but hallucinate on domain-specific queries. It might have low perplexity but produce biased outputs. It might crush GSM8K but fail at real-world math applied to your use case.

The key is building an evaluation suite tailored to your deployment context — combining standard metrics with domain-specific benchmarks and qualitative human evaluation.

And don't forget localization. If your model serves a global audience, you need to evaluate whether it performs consistently across languages and cultural contexts, not just in English.
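One lightweight way to check for this is to tag every test case with a slice (language, region, domain) and report accuracy per slice alongside the overall number. The sketch below uses made-up results and hypothetical language codes purely to show the shape of the analysis.

```python
from collections import defaultdict


def accuracy_by_slice(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Group (slice_name, is_correct) pairs and compute accuracy per slice."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for slice_name, is_correct in results:
        totals[slice_name] += 1
        correct[slice_name] += int(is_correct)
    return {name: correct[name] / totals[name] for name in totals}


# Hypothetical graded results: 10 English cases and 10 Hindi cases.
results = (
    [("en", True)] * 9 + [("en", False)] * 1
    + [("hi", True)] * 6 + [("hi", False)] * 4
)

scores = accuracy_by_slice(results)
overall = sum(ok for _, ok in results) / len(results)
gap = max(scores.values()) - min(scores.values())

print(f"overall accuracy: {overall:.2f}")
for name, score in scores.items():
    print(f"  {name}: {score:.2f}")
print(f"largest gap between slices: {gap:.2f}")
```

Here the overall accuracy of 0.75 hides a 30-point gap between the two languages, which is exactly the kind of inconsistency an aggregate score will not surface.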

The models that win in production aren't the ones with the best benchmark scores. They're the ones that were evaluated honestly.

What evaluation metrics have you found most valuable for your LLM projects? I'd love to hear what's worked (and what hasn't) in the comments.

#LLM #AI #MachineLearning #ModelEvaluation #NLP #DeepLearning #ArtificialIntelligence #MLOps

## How Do You Really Know If Your LLM Is Good Enough? A Guide to Controlled Evaluation Metrics: operator perspective

This guide matters less for its headline than for what it forces operators to re-examine in their own stack: eval gates, fallback routing, and tool-call latency budgets. The CallSphere stack treats announcements as input to an evals queue, not a product roadmap. Production agents stay pinned; new releases earn their slot only after a regression suite confirms cost, latency, and tool-call reliability move the right way.

## Base model vs. production LLM stack: the gap that costs you uptime

A base model is a checkpoint. A production LLM stack is a whole different artifact: eval gates that fail the build on regression, prompt caching that cuts repeated-system-prompt cost by 40-70%, structured outputs that prevent JSON drift on tool calls, fallback chains that route to a smaller-model retry when the primary times out, and request-side guardrails that cap tool calls per session before the loop spirals.

CallSphere runs LLMs in tandem on purpose: `gpt-4o-realtime` for the live call (streaming audio in and out, tool calls inline) and `gpt-4o-mini` for post-call analytics (sentiment scoring, lead qualification, summary generation, and the lower-stakes async work that doesn't need realtime). That split is not a cost optimization; it's a reliability decision. Realtime is optimized for low-latency turn-taking; mini is optimized for cheap, deterministic batch scoring. Mixing them lets each do what it's good at without one regressing the other.

The teams that struggle with LLMs in production almost always made the same mistake: they treated "the model" as a single dependency instead of as a small portfolio of models, each pinned to a job, each behind its own eval suite, each with a documented fallback.

## FAQs

**Q: Does this guide's approach actually move p95 latency or tool-call reliability?**

A: Most of the time it doesn't, and that's the right starting assumption. The relevant test is whether it improves at least one of: p95 first-token latency, tool-call argument accuracy on noisy inputs, multi-turn handoff stability, or per-session cost. The CallSphere stack (Twilio + OpenAI Realtime + ElevenLabs + NestJS + Prisma + Postgres) is sized for fast turn-taking, not raw model size.

**Q: What would have to be true before this guide's approach ships into production?**

A: The eval gate is unsentimental: a regression suite that simulates real call traffic (noisy ASR, partial inputs, tool-call timeouts) measures four numbers, and a candidate has to win on three of four without losing badly on the fourth. Anything else is treated as a blog post, not a stack change.

**Q: Which CallSphere vertical would benefit from this guide's approach first?**

A: In a CallSphere deployment, new model and API capabilities land first in the post-call analytics pipeline (lower stakes, async, easy to roll back) and only later in the live realtime path. Today the verticals most likely to absorb new capability first are Healthcare and Sales, which already run the largest share of production traffic.

## See it live

Want to see real estate agents handle real traffic? Walk through https://realestate.callsphere.tech or grab 20 minutes with the founder: https://calendly.com/sagar-callsphere/new-meeting.
