Standardized Test Cases to Assess AI Model Performance

Why Evaluation Matters

As AI systems move from demos to real products, subjective impressions are no longer enough. We need measurable, repeatable, and standardized testing to understand whether a model is actually improving. Controlled evaluation provides exactly that — structured test cases that objectively measure performance across different tasks and domains.

Instead of asking “Does the model feel smarter?”, controlled evaluation asks “Did the model get more correct answers on the same benchmark?”
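A minimal sketch of that question in code, assuming a model can be treated as a function from prompt to answer. The test cases and the two stand-in models (`model_v1`, `model_v2`) are hypothetical and exist only to show the same fixed cases being scored for both versions.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical fixed test set: (prompt, expected answer). It stays the same
# for every model version so that scores are directly comparable.
TEST_CASES = [
    ("2 + 2", "4"),
    ("capital of France", "Paris"),
    ("opposite of hot", "cold"),
]


def count_correct(model: Callable[[str], str],
                  cases: Sequence[Tuple[str, str]]) -> int:
    """Count the test cases where the model output equals the expected answer."""
    return sum(1 for prompt, expected in cases if model(prompt).strip() == expected)


# Stand-in models for illustration only; a real model call would go here.
def model_v1(prompt: str) -> str:
    return {"2 + 2": "4", "capital of France": "Lyon"}.get(prompt, "")


def model_v2(prompt: str) -> str:
    answers = {"2 + 2": "4", "capital of France": "Paris", "opposite of hot": "cold"}
    return answers.get(prompt, "")


v1_score = count_correct(model_v1, TEST_CASES)
v2_score = count_correct(model_v2, TEST_CASES)
print(f"v1: {v1_score}/{len(TEST_CASES)}, v2: {v2_score}/{len(TEST_CASES)}")
```

Because both versions see identical inputs, any change in the score comes from the model and not from the test.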

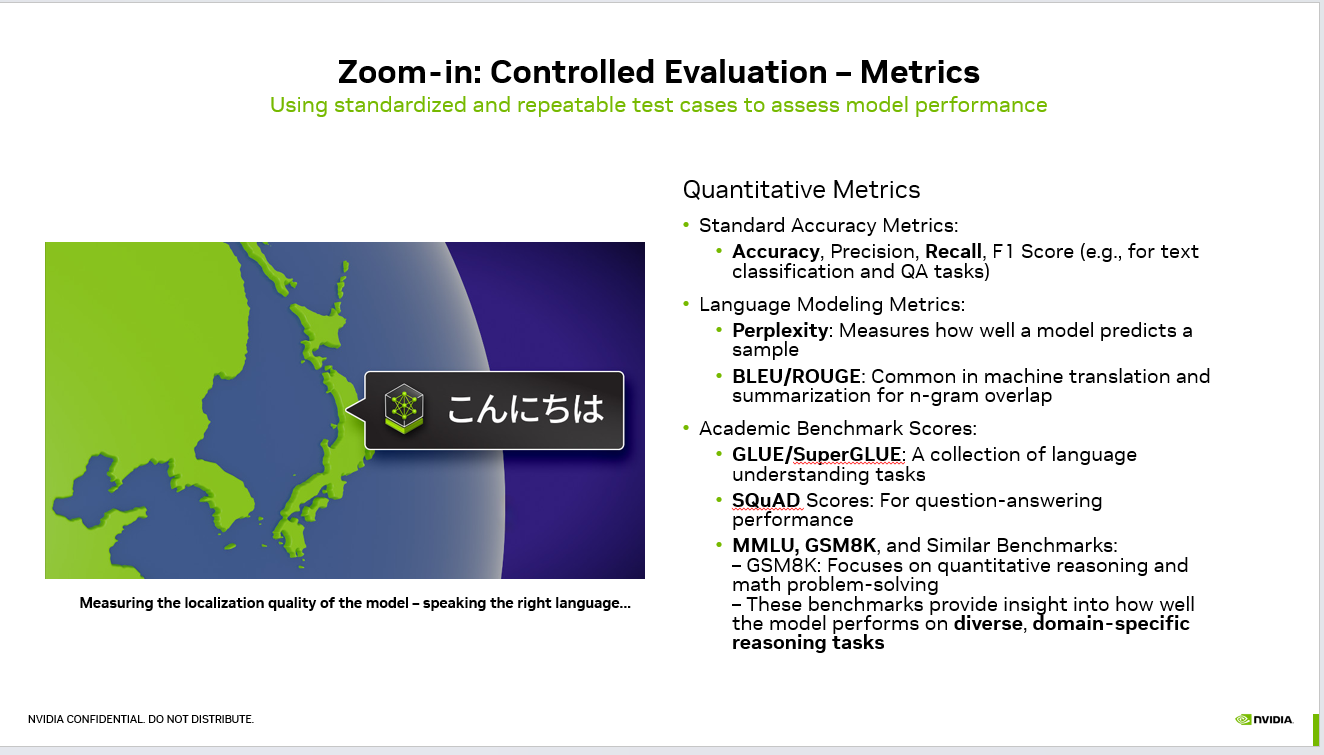

Core Quantitative Metrics

1. Accuracy Metrics

[Figure: flowchart of an AI agent pipeline. User intent → parse and classify → plan and tool selection → agent loop (LLM plus tools) → guardrails and policy. On pass: execute and verify result → outcome plus next action. On fail: back to the agent loop. The agent loop also emits traces and metrics.]

These are the most common metrics used in classification and question‑answering tasks:

Accuracy – Percentage of correct predictions

Precision – Correct positives among predicted positives

Recall – Correct positives among actual positives

F1 Score – Harmonic mean of precision and recall

They help evaluate reliability when the output must be strictly correct, as in routing, classification, or intent detection.
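The four metrics above can be computed directly from the counts of true and false positives and negatives. The following is a minimal sketch for the binary case; the function name `binary_metrics`, the `BinaryMetrics` container, and the sample labels are assumptions made for illustration, and returning 0.0 when a denominator is zero is one common convention, not the only one.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class BinaryMetrics:
    accuracy: float
    precision: float
    recall: float
    f1: float


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int],
                   positive: int = 1) -> BinaryMetrics:
    """Compute accuracy, precision, recall and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return BinaryMetrics(accuracy, precision, recall, f1)


# Hypothetical labels: 1 = "route to billing", 0 = anything else.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

In this sample there are 3 true positives, 1 false positive, 1 false negative and 3 true negatives, so all four metrics come out to 0.75.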

2. Language Modeling Metrics

Used when models generate text rather than select labels.

Perplexity

Measures how well a model predicts text. It is the exponential of the average negative log-likelihood per token, so lower perplexity means the model assigns higher probability to the text it is evaluated on.
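As a rough illustration, perplexity can be computed from the log-probabilities a model assigns to each token of a held-out text. The sketch below assumes those per-token natural-log probabilities are already available as a list; the function name `perplexity` and the sample values are hypothetical.

```python
import math
from typing import Sequence


def perplexity(token_log_probs: Sequence[float]) -> float:
    """Perplexity = exp(average negative log-likelihood per token).

    token_log_probs holds the natural-log probability the model assigned
    to each observed token.
    """
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)


# A model that gives every token probability 0.25 is, on average,
# as uncertain as a uniform choice among 4 options.
uniform_guess = [math.log(0.25)] * 5
confident = [math.log(0.9)] * 5

ppl_uniform = perplexity(uniform_guess)
ppl_confident = perplexity(confident)
print(ppl_uniform, ppl_confident)
```

Perplexity values are only comparable between models that share the same tokenization and the same evaluation text.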

BLEU / ROUGE

Compare generated text with reference text by measuring n-gram overlap. BLEU is precision-oriented and common in translation; ROUGE is recall-oriented and common in summarization.
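The overlap idea can be sketched with single words (unigrams). This is a deliberately simplified illustration, not an implementation of BLEU or ROUGE: real BLEU combines several n-gram orders with a brevity penalty, and ROUGE has multiple variants. The function name `unigram_overlap` and the whitespace tokenization are assumptions.

```python
from collections import Counter
from typing import Tuple


def unigram_overlap(candidate: str, reference: str) -> Tuple[float, float]:
    """Return (precision, recall) of clipped unigram overlap.

    Precision: share of candidate words that also appear in the reference.
    Recall: share of reference words that also appear in the candidate.
    Counts are clipped so a repeated word cannot be matched more often
    than it occurs on the other side.
    """
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # multiset intersection
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    return precision, recall


precision, recall = unigram_overlap(
    "the cat sat on the mat",
    "the cat is on the mat",
)
print(precision, recall)
```

Here five of the six words match on each side, so both values are 5/6. For reported results, an established scoring library is a safer choice than a hand-rolled function.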

3. Academic Benchmark Suites

Benchmark suites go beyond single-task correctness and probe broader language understanding and reasoning.

GLUE / SuperGLUE – General language understanding tasks

SQuAD – Reading comprehension through question answering

MMLU – Multi‑domain knowledge and reasoning

GSM8K – Math reasoning and problem solving

Results on these benchmarks help indicate whether a model grasps the underlying concepts or only imitates surface patterns.
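Many question-answering and math benchmarks are scored by comparing a model's final answer with a reference answer. A minimal sketch of exact-match scoring follows; the normalization steps (lowercasing, stripping punctuation and English articles) are one common choice and are an assumption here, not the official scoring script of any benchmark listed above. The sample predictions are hypothetical.

```python
import re
import string
from typing import Sequence


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match_rate(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Fraction of predictions that equal their reference after normalization."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not predictions:
        return 0.0
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(predictions)


predictions = ["The Eiffel Tower.", "42", "paris"]
references = ["Eiffel Tower", "43", "Paris"]
em = exact_match_rate(predictions, references)
print(em)
```

Two of the three answers match after normalization, so the score is 2/3.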

What Controlled Evaluation Actually Tells You

Controlled evaluation answers three critical product questions:

Is the model improving after a new training iteration?

Does performance hold across domains and languages?

Are we optimizing real capability or just changing style?

For example, a conversational AI might sound fluent while failing reasoning tests — benchmarks expose that gap immediately.
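The second question, whether performance holds across domains and languages, is usually answered by breaking one overall score into per-group scores. A minimal sketch, assuming each evaluated example is recorded as a `(group, is_correct)` pair; the function name `accuracy_by_group` and the sample results are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_group(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute accuracy separately for each group (domain, language, ...)."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for group, is_correct in results:
        totals[group] += 1
        correct[group] += int(is_correct)
    return {group: correct[group] / totals[group] for group in totals}


# Hypothetical per-example outcomes tagged by language.
results = [
    ("en", True), ("en", True), ("en", True), ("en", False),
    ("es", True), ("es", False),
]
per_language = accuracy_by_group(results)
print(per_language)
```

The overall accuracy in this sample is 4/6, which hides the difference between 0.75 for English and 0.5 for Spanish.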

Practical Impact in Production AI

In production systems — customer support agents, copilots, or voice assistants — improvements must be measurable. Controlled evaluation prevents regression and enables safe iteration by:

Tracking performance over time

Comparing models objectively

Detecting silent failures

Validating localization quality

Without evaluation, scaling AI becomes guesswork.
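One way to turn these points into a release check is to compare a candidate model's metrics against a stored baseline and flag any drop beyond a tolerance. The sketch below assumes higher is better for every metric; the function name `find_regressions`, the metric names, the numbers, and the 0.01 tolerance are all hypothetical.

```python
from typing import Dict, Optional, Tuple


def find_regressions(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    tolerance: float = 0.01,
) -> Dict[str, Tuple[float, Optional[float]]]:
    """Return metrics where the candidate is worse than the baseline.

    A metric is flagged if it dropped by more than `tolerance`, or if the
    candidate run did not report it at all (recorded as None).
    Assumes higher values are better for every metric.
    """
    regressions: Dict[str, Tuple[float, Optional[float]]] = {}
    for name, base_value in baseline.items():
        new_value = candidate.get(name)
        if new_value is None:
            regressions[name] = (base_value, None)
        elif base_value - new_value > tolerance:
            regressions[name] = (base_value, new_value)
    return regressions


baseline = {"intent_accuracy": 0.92, "f1": 0.88, "exact_match": 0.75}
candidate = {"intent_accuracy": 0.93, "f1": 0.84, "exact_match": 0.745}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

In this sample only `f1` is flagged: it fell by 0.04, while the 0.005 drop in `exact_match` stays within the tolerance. A check like this can run on every new model version so that regressions surface before release.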

Final Thought

AI progress should not be judged by how impressive a demo looks, but by how consistently it performs under the same conditions. Controlled evaluation transforms AI development from experimentation into engineering — measurable, reliable, and repeatable.
