What is Controlled Evaluation for Large Language Models?

Assessing LLM Performance: Strategies to Evaluate and Improve Your App.

In today’s AI race, most teams optimize for impressive demos.

Very few optimize for measurable performance.

If you’re building AI-powered products, controlled evaluation is not optional — it’s your competitive advantage.

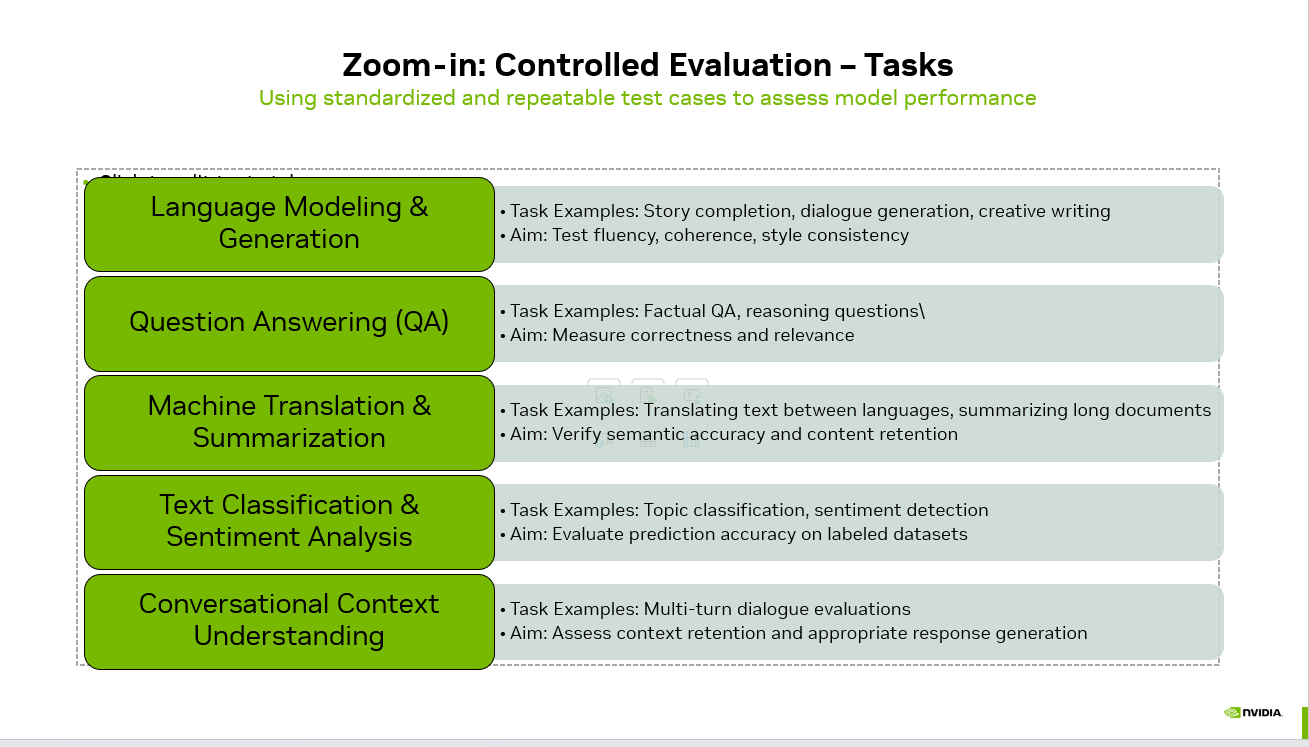

Controlled evaluation means using standardized, repeatable test cases to assess model performance across clearly defined tasks. Instead of relying on subjective judgment (“it sounds good”), you measure structured outcomes.
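
To make that concrete, here is a minimal sketch of a repeatable test suite in Python. Everything in it is illustrative: `EvalCase`, `run_suite`, and `stub_model` are hypothetical names, and the stub stands in for whatever real model call your app makes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    """One fixed, versionable test case (hypothetical structure)."""
    case_id: str
    prompt: str
    expected: str


def run_suite(model: Callable[[str], str], cases: list[EvalCase]) -> dict[str, bool]:
    """Run every case through the model and record pass/fail per case id."""
    results = {}
    for case in cases:
        answer = model(case.prompt)
        # Case-insensitive exact comparison; real suites often need looser matching.
        results[case.case_id] = answer.strip().lower() == case.expected.strip().lower()
    return results


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM call; swap in your own client here.
    canned = {"What is 2 + 2?": "4", "What is the capital of France?": "Paris"}
    return canned.get(prompt, "I don't know")


CASES = [
    EvalCase("math-001", "What is 2 + 2?", "4"),
    EvalCase("geo-001", "What is the capital of France?", "paris"),
    EvalCase("geo-002", "What is the capital of Japan?", "Tokyo"),
]

results = run_suite(stub_model, CASES)
pass_rate = sum(results.values()) / len(results)
print(results, f"pass rate: {pass_rate:.2f}")
```

Because the cases are fixed and identified by id, you can rerun the same suite after every prompt or model change and see exactly which cases flipped.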

Let’s break down the core task categories every serious AI team should evaluate.

1️⃣ Language Modeling & Generation

Task Examples:

Story completion

Dialogue generation

Creative writing

What You’re Testing:

Fluency

Coherence

Style consistency

Creative generation often looks impressive in demos. But in production, you need consistency. Can the model maintain tone across 1,000 outputs? Does it drift stylistically? Does it hallucinate details?

Controlled prompts + scoring rubrics = measurable creativity.
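
One way to turn a rubric into a number is a weighted average of per-criterion ratings. The sketch below assumes ratings on a 1 to 5 scale from a human reviewer or a judge model; the weights, the scale, and the function names are assumptions for illustration, not a standard.

```python
from statistics import mean, pstdev

# Hypothetical weights for the three criteria listed above; they sum to 1.
RUBRIC_WEIGHTS = {"fluency": 0.4, "coherence": 0.4, "style_consistency": 0.2}
SCALE_MAX = 5  # ratings are assumed to be integers from 1 to 5


def rubric_score(ratings: dict[str, int]) -> float:
    """Collapse per-criterion ratings into one weighted score in [0, 1]."""
    missing = set(RUBRIC_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(w * ratings[name] / SCALE_MAX for name, w in RUBRIC_WEIGHTS.items())


def summarize(batch: list[dict[str, int]]) -> dict[str, float]:
    """Aggregate a batch of rated outputs; spread and worst case expose drift."""
    scores = [rubric_score(r) for r in batch]
    return {"mean": mean(scores), "spread": pstdev(scores), "worst": min(scores)}


batch = [
    {"fluency": 5, "coherence": 4, "style_consistency": 3},
    {"fluency": 5, "coherence": 5, "style_consistency": 5},
    {"fluency": 4, "coherence": 3, "style_consistency": 2},
]
summary = summarize(batch)
print(summary)
```

Tracking the spread and the worst score alongside the mean is what surfaces inconsistency: a high average can hide a handful of outputs that drift badly.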

2️⃣ Question Answering (QA)

Task Examples:

Factual question answering

Multi-step reasoning questions

What You’re Testing:

Correctness

Relevance

Logical consistency

This is where hallucinations become visible.

Benchmarking factual accuracy and reasoning depth on controlled inputs tells you whether your system is reliable enough for customer-facing use cases.
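
For short factual answers, two common correctness scores are exact match and token-level F1 after light normalization, in the style used by extractive QA benchmarks. The sketch below is a simplified version; the normalization rules (lowercasing, stripping punctuation and English articles) are an assumption you should adapt to your own data.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> bool:
    return normalize(prediction) == normalize(gold)


def token_f1(prediction: str, gold: str) -> float:
    """Harmonic mean of token precision and recall against the gold answer."""
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    if not pred_tokens or not gold_tokens:
        return float(pred_tokens == gold_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("The Eiffel Tower.", "eiffel tower"))
print(round(token_f1("Paris, France", "Paris"), 3))
```

Exact match is strict and easy to interpret; F1 gives partial credit when the model wraps the right answer in extra words. Neither one checks multi-step reasoning, which usually needs a rubric or a per-step answer key.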

3️⃣ Machine Translation & Summarization

Task Examples:

Translating text between languages

Summarizing long-form documents

What You’re Testing:

Semantic accuracy

Content retention

Information compression quality

It’s easy for a model to sound fluent while subtly changing meaning. A controlled evaluation checks whether the output preserves the source’s intent and key details.
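
A crude but repeatable proxy for content retention is to list the facts a good summary must keep and check which ones survive. The sketch below assumes you maintain such a list per test document; `content_retention`, `compression_ratio`, and the sample text are all hypothetical, and plain substring matching will miss paraphrases.

```python
def content_retention(summary: str, key_facts: list[str]) -> tuple[float, list[str]]:
    """Return the share of key facts found in the summary, plus the missing ones."""
    if not key_facts:
        raise ValueError("key_facts must not be empty")
    text = summary.lower()
    # Case-insensitive substring check; paraphrased facts will count as missing.
    missing = [fact for fact in key_facts if fact.lower() not in text]
    return 1 - len(missing) / len(key_facts), missing


def compression_ratio(source: str, summary: str) -> float:
    """Summary length divided by source length, measured in whitespace tokens."""
    return len(summary.split()) / len(source.split())


# Made-up sample document for illustration only.
source = (
    "Acme Corp reported revenue of $12 million in Q3, up 8% from Q2. "
    "The company attributed the growth to its new subscription plan "
    "and plans to hire 40 engineers next year."
)
summary = "Acme Corp's Q3 revenue rose 8% to $12 million, driven by its new subscription plan."
key_facts = ["$12 million", "8%", "subscription plan", "40 engineers"]

retention, missing = content_retention(summary, key_facts)
print(retention, missing)
print(round(compression_ratio(source, summary), 2))
```

Reading the two numbers together is the point: a summary that is very short but drops key facts has compressed by deleting information, not by condensing it.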

4️⃣ Text Classification & Sentiment Analysis

Task Examples:

Topic classification

Sentiment detection

What You’re Testing:

Prediction accuracy

Precision / recall

Robustness across edge cases

Here, LLMs can be compared directly against traditional ML baselines. Scoring both on the same labeled dataset makes the comparison objective.
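
As a sketch of that comparison, the code below computes accuracy and per-class precision and recall for two sets of predictions on the same gold labels. The labels and both prediction lists are invented for illustration; in practice they would come from your labeled dataset, your LLM, and your baseline classifier.

```python
def accuracy(gold: list[str], pred: list[str]) -> float:
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def per_class_metrics(gold: list[str], pred: list[str]) -> dict[str, dict[str, float]]:
    """Precision and recall for each label that appears in gold or pred."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    metrics = {}
    for label in sorted(set(gold) | set(pred)):
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        metrics[label] = {
            "precision": tp / (tp + fp) if tp + fp else 0.0,
            "recall": tp / (tp + fn) if tp + fn else 0.0,
        }
    return metrics


# Invented sentiment labels and predictions, for illustration only.
gold = ["pos", "neg", "pos", "neu", "neg", "pos"]
llm_pred = ["pos", "neg", "neg", "neu", "neg", "pos"]
baseline_pred = ["pos", "pos", "neg", "neu", "neg", "pos"]

for name, pred in [("llm", llm_pred), ("baseline", baseline_pred)]:
    print(name, round(accuracy(gold, pred), 3), per_class_metrics(gold, pred))
```

Per-class numbers matter because overall accuracy can look fine while one class, often the rare edge case, is handled poorly.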

5️⃣ Conversational Context Understanding

Task Examples:

Multi-turn dialogue evaluation

Context carryover tests

What You’re Testing:

Context retention

Response appropriateness

Instruction adherence

This is critical for AI agents and enterprise assistants. Many systems perform well on single-turn prompts but degrade over longer interactions.
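
A context carryover test can be sketched as: state a fact early, add unrelated turns, then ask for the fact back. The code below assumes a chat function that takes the full message history and returns a reply string; `carryover_test` and `make_windowed_stub` are hypothetical names, and the stub simulates a system that only sees its most recent messages.

```python
from typing import Callable

Message = dict[str, str]
ChatFn = Callable[[list[Message]], str]


def carryover_test(chat: ChatFn, fact_turn: str, filler_turns: list[str],
                   probe: str, expected: str) -> bool:
    """Plant a fact, add filler turns, then check the final reply recalls it."""
    history: list[Message] = []
    for user_text in [fact_turn, *filler_turns, probe]:
        history.append({"role": "user", "content": user_text})
        reply = chat(history)
        history.append({"role": "assistant", "content": reply})
    return expected.lower() in history[-1]["content"].lower()


def make_windowed_stub(window: int) -> ChatFn:
    """Hypothetical stub that only 'sees' the last `window` messages."""
    def chat(history: list[Message]) -> str:
        if "order number?" not in history[-1]["content"]:
            return "Okay."
        for message in history[-window:]:
            if message["role"] == "user" and "order number is" in message["content"]:
                number = message["content"].rsplit(" ", 1)[-1].rstrip(".")
                return f"Your order number is {number}."
        return "Sorry, I don't have that."
    return chat


args = dict(
    fact_turn="My order number is 48213.",
    filler_turns=["What are your opening hours?", "Do you ship abroad?"],
    probe="What is my order number?",
    expected="48213",
)
long_memory = carryover_test(make_windowed_stub(window=20), **args)
short_memory = carryover_test(make_windowed_stub(window=3), **args)
print(long_memory, short_memory)
```

Varying the number of filler turns while keeping everything else fixed shows how far into a conversation retention holds up, which a single-turn test cannot reveal.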

Why This Matters

Without controlled evaluation:

You can’t compare models objectively.

You can’t measure improvements.

You can’t justify production deployment decisions.

You can’t build trust with stakeholders.

With controlled evaluation:

You move from opinion to metrics.

From demo-driven to data-driven.

From experimentation to engineering discipline.

The future of AI development won’t be decided by who builds the flashiest demo.

It will be decided by who measures performance rigorously and improves systematically.

If you're building with LLMs in 2026, ask yourself:

👉 Do you have a structured evaluation pipeline — or just impressive screenshots?

#AI #LLM #ArtificialIntelligence #MachineLearning #AIEngineering #GenAI #ModelEvaluation #DataDriven #AIProductDevelopment
