In-Context Learning (ICL): How Modern LLMs Learn Without Retraining

Large Language Models (LLMs) have transformed how we build intelligent systems. One of the most powerful capabilities behind their flexibility is In-Context Learning (ICL). Instead of retraining the model every time we want it to perform a new task, we can guide it using examples directly in the prompt.

What is In-Context Learning?

In-Context Learning refers to the ability of a language model to learn patterns from examples provided within the prompt itself, without updating the model’s parameters.

This means the model adapts its responses based on the examples you provide in the same input context.

For AI engineers, this is powerful because it allows rapid experimentation and task adaptation without expensive training pipelines.

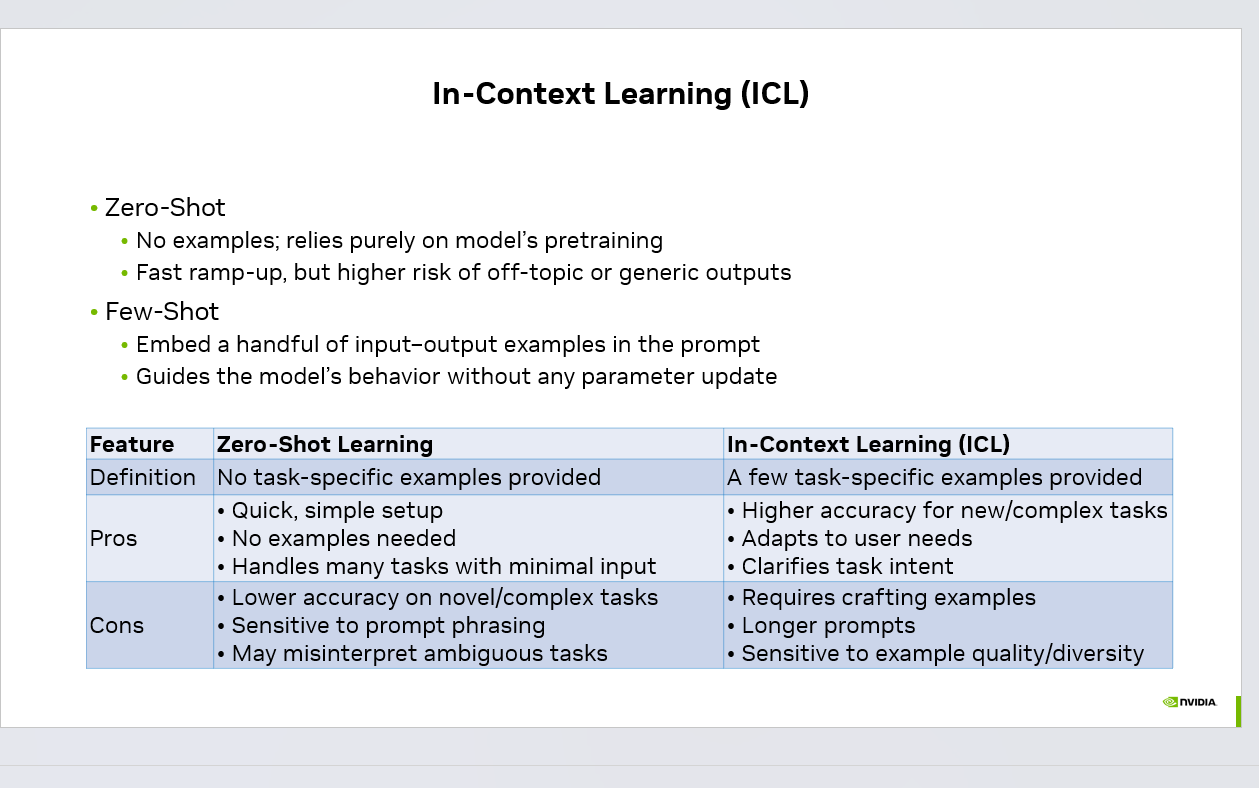

Zero-Shot Learning

Zero-shot learning is the simplest way to use an LLM.

```mermaid
flowchart LR
    INPUT(["User intent"])
    PARSE["Parse plus<br/>classify"]
    PLAN["Plan and tool<br/>selection"]
    AGENT["Agent loop<br/>LLM plus tools"]
    GUARD{"Guardrails<br/>and policy"}
    EXEC["Execute and<br/>verify result"]
    OBS[("Trace and metrics")]
    OUT(["Outcome plus<br/>next action"])
    INPUT --> PARSE --> PLAN --> AGENT --> GUARD
    GUARD -->|Pass| EXEC --> OUT
    GUARD -->|Fail| AGENT
    AGENT --> OBS
    style AGENT fill:#4f46e5,stroke:#4338ca,color:#fff
    style GUARD fill:#f59e0b,stroke:#d97706,color:#1f2937
    style OBS fill:#ede9fe,stroke:#7c3aed,color:#1e1b4b
    style OUT fill:#059669,stroke:#047857,color:#fff
```

Here, no examples are provided. The model relies entirely on its pretraining knowledge to understand and respond to the task.

Example:

Text: "This product is amazing."

Task: Classify sentiment
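The zero-shot example above can be assembled in code. This is a minimal sketch: the function name `build_zero_shot_prompt`, the choice to put the task line first, and the trailing `Answer:` cue are illustrative assumptions rather than a required format. No model is called here; the sketch only builds the string you would send.

```python
def build_zero_shot_prompt(text: str, task: str) -> str:
    """Build a prompt that states the task and the input, with no worked examples.

    The "Answer:" cue is an assumed convention that nudges the model to reply
    with the label only; it is not mandated by any particular API.
    """
    return f'Task: {task}\nText: "{text}"\nAnswer:'


# Same text and task as the example in the article.
prompt = build_zero_shot_prompt("This product is amazing.", "Classify sentiment")
print(prompt)
```

Because the prompt contains no demonstrations, everything the model knows about "sentiment" has to come from pretraining.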

Advantages:

Quick and simple setup

No examples needed

Works well for common tasks

Limitations:

Lower accuracy for complex tasks

Sensitive to prompt wording

May produce generic outputs
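One way to see the sensitivity to prompt wording is to ask the same question in several phrasings and measure how often the answers agree. The sketch below assumes a hypothetical `classify` callable that wraps whatever model you use; `stub_classify` is only a stand-in so the example runs without a model, and the three templates are illustrative.

```python
from collections import Counter
from typing import Callable, Sequence, Tuple


def agreement_across_wordings(
    classify: Callable[[str], str],
    templates: Sequence[str],
    text: str,
) -> Tuple[str, float]:
    """Return the most common answer and the fraction of wordings that produced it."""
    if not templates:
        raise ValueError("at least one prompt template is required")
    # Normalize replies so "Positive" and " positive " count as the same answer.
    answers = [classify(t.format(text=text)).strip().lower() for t in templates]
    label, votes = Counter(answers).most_common(1)[0]
    return label, votes / len(answers)


# Three phrasings of the same zero-shot task (illustrative).
TEMPLATES = [
    'Classify the sentiment of this text: "{text}"',
    'Is the following text positive or negative? "{text}"',
    'Text: "{text}"\nTask: Classify sentiment',
]


def stub_classify(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; replace with your own client."""
    return "Positive" if "amazing" in prompt else "Negative"


label, agreement = agreement_across_wordings(
    stub_classify, TEMPLATES, "This product is amazing."
)
print(label, agreement)
```

With a real model, an agreement score well below 1.0 suggests the task is underspecified and may benefit from examples in the prompt.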

Few-Shot Learning

Few-shot learning improves performance by adding a few input-output examples in the prompt. These examples guide the model on how to behave.

Example:

Text: I love this movie

Sentiment: Positive

Text: This is the worst service ever

Sentiment: Negative

Text: The product quality is great

Sentiment:
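The few-shot prompt above follows a fixed pattern: each demonstration is a `Text:` line followed by a `Sentiment:` line, and the final block leaves the label blank for the model to complete. A minimal sketch of that pattern, where the function name `build_few_shot_prompt` and the blank-line separator are assumptions:

```python
from typing import Sequence, Tuple


def build_few_shot_prompt(examples: Sequence[Tuple[str, str]], query: str) -> str:
    """Render (text, label) demonstrations, then the query with an empty label slot."""
    blocks = [f"Text: {text}\nSentiment: {label}" for text, label in examples]
    # The last block ends at "Sentiment:" so the model's continuation is the label.
    blocks.append(f"Text: {query}\nSentiment:")
    return "\n\n".join(blocks)


# The two demonstrations and the query from the example in the article.
EXAMPLES = [
    ("I love this movie", "Positive"),
    ("This is the worst service ever", "Negative"),
]
few_shot_prompt = build_few_shot_prompt(EXAMPLES, "The product quality is great")
print(few_shot_prompt)
```

Swapping the contents of `EXAMPLES` changes the task the model performs, while the model's parameters stay untouched.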

Advantages:

Higher accuracy for complex tasks

Helps clarify task intent

Enables customized outputs

Limitations:

Requires carefully crafted examples

Longer prompts

Output quality depends on example diversity
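Two of these limitations pull against each other: more examples improve coverage but lengthen the prompt. One simple compromise, sketched below under the assumption that each example carries a label, is to cap the number of examples and interleave labels so that no single class dominates. The helper `select_balanced` and the extra example texts are hypothetical.

```python
from collections import defaultdict
from itertools import zip_longest
from typing import Dict, List, Sequence, Tuple

Example = Tuple[str, str]  # (text, label)


def select_balanced(pool: Sequence[Example], k: int) -> List[Example]:
    """Pick up to k examples, cycling through labels so no label dominates."""
    by_label: Dict[str, List[Example]] = defaultdict(list)
    for example in pool:
        by_label[example[1]].append(example)
    # Take one example per label per round; None pads labels that run out early.
    interleaved = [
        example
        for round_ in zip_longest(*by_label.values())
        for example in round_
        if example is not None
    ]
    return interleaved[:k]


# A skewed pool: three positive examples and one negative (made-up data).
POOL = [
    ("I love this movie", "Positive"),
    ("Great acting", "Positive"),
    ("Best purchase this year", "Positive"),
    ("This is the worst service ever", "Negative"),
]
picked = select_balanced(POOL, 2)
print(picked)
```

Taking the first two items of `POOL` directly would give the model only positive demonstrations; the interleaved selection includes one of each label within the same budget.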

Why In-Context Learning Matters

ICL is one of the main reasons LLMs are so versatile. Instead of training a new model for every task, developers can simply guide the model with examples inside the prompt.

This enables:

Rapid prototyping of AI applications

Faster experimentation in prompt engineering

Reduced need for costly fine-tuning

Flexible task adaptation across domains

Many modern systems, such as AI agents, chatbots, document analysis tools, and coding assistants, rely heavily on in-context learning to improve response quality.

When to Use Each Approach

Use Zero-Shot when:

The task is simple

The model already understands the domain

Speed and simplicity matter

Use Few-Shot when:

The task is complex or ambiguous

You need structured outputs

You want more consistent responses
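For the structured-output case, few-shot examples can demonstrate the exact output shape, and the caller can then validate the reply instead of trusting it. The sketch below assumes a JSON shape of `{"sentiment": "<label>"}`; that shape, the `STRUCTURED_PROMPT` text, and the helper `parse_sentiment` are illustrative choices, not a standard.

```python
import json
from typing import Optional

ALLOWED_LABELS = {"Positive", "Negative"}

# Few-shot prompt whose demonstrations show the desired JSON shape (assumed format).
STRUCTURED_PROMPT = (
    "Text: I love this movie\n"
    'Output: {"sentiment": "Positive"}\n\n'
    "Text: This is the worst service ever\n"
    'Output: {"sentiment": "Negative"}\n\n'
    "Text: The product quality is great\n"
    "Output:"
)


def parse_sentiment(raw_reply: str) -> Optional[str]:
    """Return the label if the reply matches the demonstrated JSON shape, else None."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return None  # The model replied with something that is not JSON.
    if not isinstance(data, dict):
        return None
    label = data.get("sentiment")
    if isinstance(label, str) and label in ALLOWED_LABELS:
        return label
    return None  # Missing key or a label outside the allowed set.


print(parse_sentiment('{"sentiment": "Positive"}'))
print(parse_sentiment("Positive"))
```

A `None` result is a signal to retry or fall back, which is easier to handle downstream than free-form text.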

Final Thoughts

In-Context Learning is one of the most practical techniques in modern AI development. With just a few well-designed examples, we can guide powerful models to perform new tasks without retraining.

As LLM capabilities continue to evolve, prompt design and example selection will remain critical skills for AI engineers building real-world intelligent systems.

What are some interesting ways you’ve used in-context learning in your projects?

#AI #LLM #MachineLearning #PromptEngineering #GenerativeAI #ArtificialIntelligence

## In-Context Learning (ICL) from an operator perspective

When teams move beyond In-Context Learning (ICL), one question shows up first: where does the agent loop actually end? In practice, the boundary is rarely the model. It is the contract between the orchestrator and the tools it calls. The teams that ship fastest treat in-context learning (ICL) as an evals problem first and a modeling problem second. They write the failure cases into the regression set on day one, not after the first incident.

## Why this matters for AI voice + chat agents

Agentic AI in a real call center is a different beast than a single-LLM chatbot. Instead of one model answering one prompt, you orchestrate a small team: a router that decides intent, specialists that own a vertical (booking, intake, billing, escalation), and tools that read and write to the same Postgres your CRM trusts. Hand-offs are where most production bugs hide: when Agent A passes context to Agent B, anything that isn't explicit in the message gets lost, and the user feels it as the agent "forgetting."

That's why the systems that hold up under load are the ones with typed tool schemas, deterministic state stored outside the conversation, and a hard ceiling on tool calls per session.

The cost story is just as important: a multi-agent loop can quietly burn 10x the tokens of a single-LLM design if you let it think out loud at every step. The fix isn't a smarter model. It's smaller agents, shorter prompts, cached system messages, and evals that fail the build when p95 latency or per-session cost regresses.

CallSphere runs this pattern across 6 verticals in production, and the rule has held every time: the agent you can debug in five minutes will out-survive the agent that's "smarter" on a benchmark.

## FAQs

**Q: What's the hardest part of running In-Context Learning (ICL) live?**

A: Scaling comes from constraint, not capability. The deployments that hold up keep each agent narrow, cap tool calls per turn, cache the system prompt, and pin a smaller model for routing while reserving the larger model for synthesis. CallSphere's stack (37 agents, 90+ tools, 115+ DB tables, 6 verticals live) is sized that way on purpose.

**Q: How do you evaluate In-Context Learning (ICL) before shipping?**

A: Hard ceilings beat heuristics. A maximum step count, an idempotency key on every tool call, and a fallback to a deterministic script when confidence drops below a threshold are what keep the loop bounded. Evals that simulate noisy inputs catch the rest before they reach a real caller.

**Q: Which CallSphere verticals already rely on In-Context Learning (ICL)?**

A: It's already in production. Today CallSphere runs this pattern in Healthcare and After-Hours Escalation, alongside the other live verticals (Real Estate, Salon, Sales, IT Helpdesk). The same orchestrator code path serves voice and chat; the difference is the tool set the router exposes.

## See it live

Want to see sales agents handle real traffic? Spin up a walkthrough at https://sales.callsphere.tech or grab 20 minutes on the calendar: https://calendly.com/sagar-callsphere/new-meeting
