How Do LLMs Learn New Knowledge? 7 Techniques Every AI Engineer Should Know

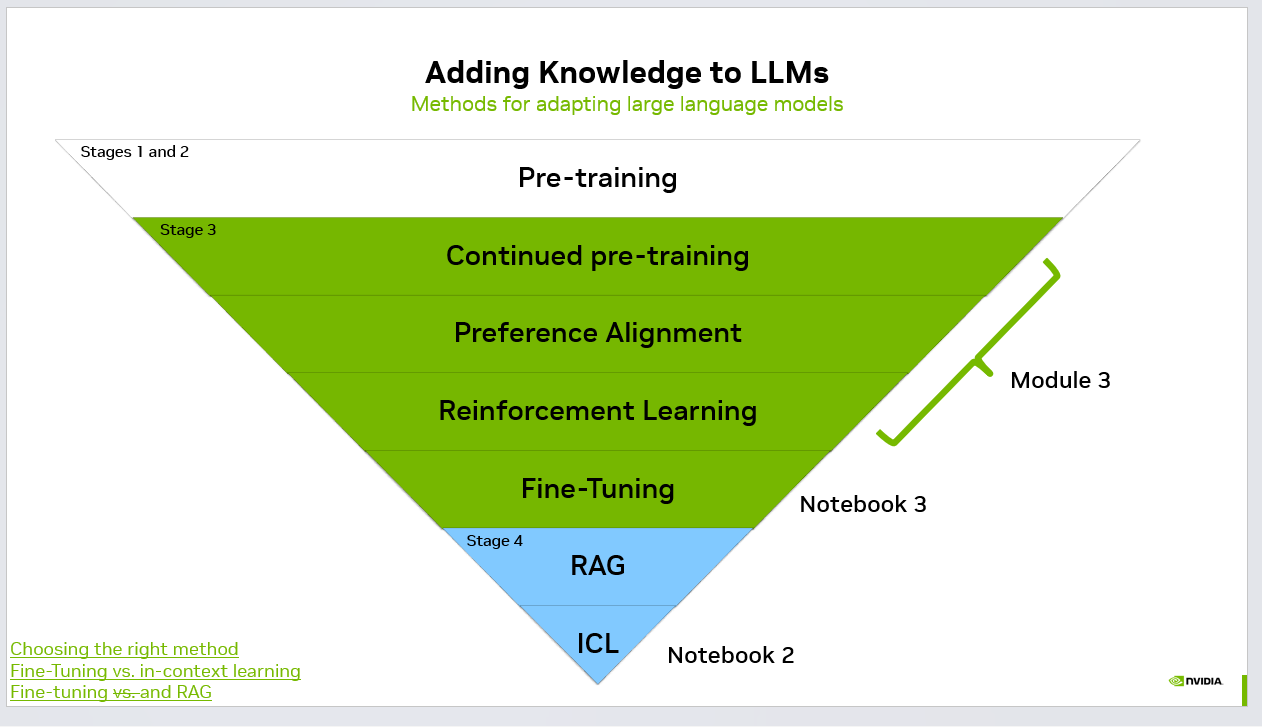

Large Language Models (LLMs) are powerful because they learn patterns from massive datasets. However, real-world applications often require adding new knowledge or adapting the model to a specific domain. The seven techniques below are the main methods used in industry to extend or specialize an LLM's capabilities.

1. Pre-training

Pre-training is the first stage, in which a model is trained on extremely large datasets such as books, websites, and code repositories, typically by learning to predict the next token. This stage teaches the model language structure, reasoning patterns, and general knowledge.

Pre-training is very expensive and is typically performed only by large organizations because of the computational cost.
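
To make the training objective concrete, here is a minimal sketch of next-token prediction. It assumes a bigram count table in place of a neural network, and the corpus and function names are hypothetical, chosen only for illustration. The average loss it prints is the cross-entropy that pre-training tries to minimize.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Turn the raw counts for `prev` into a probability distribution."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {tok: c / total for tok, c in followers.items()}

def average_loss(counts, tokens):
    """Average negative log-likelihood of each observed next token."""
    losses = []
    for prev, nxt in zip(tokens, tokens[1:]):
        # Unseen pairs get a tiny probability so the log stays finite.
        p = next_token_probs(counts, prev).get(nxt, 1e-9)
        losses.append(-math.log(p))
    return sum(losses) / len(losses)

# Toy corpus (an assumption for illustration, not real training data).
corpus = "the model reads text and the model predicts the next token".split()
counts = train_bigram(corpus)
probs = next_token_probs(counts, "the")
print(probs)
print(average_loss(counts, corpus))
```

A real LLM replaces the count table with a neural network and updates its weights by gradient descent, but the quantity being minimized has the same shape.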

2. Continued Pre-training

In this stage, an already pre-trained model is trained further on additional datasets that are more domain-specific. For example, a general-purpose model can be further trained on medical literature or financial documents.

This helps the model adapt to specialized terminology and domain knowledge.

3. Preference Alignment

Preference alignment ensures the model behaves in a helpful and safe way. Human feedback or AI-generated feedback is used to guide the model toward preferred responses.

This stage improves:

Helpfulness

Safety

Tone and behavior
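
One common way to turn pairwise feedback into a training signal is a Bradley-Terry style loss, which is small when the preferred response scores higher than the rejected one. The sketch below is only an illustration of that idea: the article does not say which alignment method is used, and the reward scores and function name are hypothetical.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) is algebraically equal to log(1 + exp(-m)).
    return math.log(1.0 + math.exp(-margin))

# Hypothetical scores for a preferred and a rejected response.
good_ranking = preference_loss(reward_chosen=2.0, reward_rejected=-1.0)
bad_ranking = preference_loss(reward_chosen=-1.0, reward_rejected=2.0)
print(good_ranking, bad_ranking)
```

Minimizing this loss pushes the score of the preferred response above the score of the rejected one, which is how a ranking from an annotator becomes a gradient.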

4. Reinforcement Learning

Reinforcement learning (often RLHF – Reinforcement Learning from Human Feedback) optimizes model responses using reward signals.

Instead of simply predicting the next word, the model learns which responses are considered better based on feedback.
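
As a toy illustration of learning from reward signals, the sketch below treats three candidate responses as actions with hypothetical reward scores and nudges a softmax policy toward the higher-reward response. To keep the output deterministic it uses the exact expected policy gradient rather than sampled rollouts, which is a simplification; all names and numbers are assumptions, not part of any real RLHF pipeline.

```python
import math

def softmax(logits):
    """Convert logits into probabilities (shifted for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def policy_gradient_step(logits, rewards, lr):
    """One gradient-ascent step on the expected reward of a softmax policy."""
    probs = softmax(logits)
    expected = sum(p * r for p, r in zip(probs, rewards))
    # d E[reward] / d logit_i = p_i * (r_i - E[reward])
    return [l + lr * p * (r - expected)
            for l, p, r in zip(logits, probs, rewards)]

# Hypothetical reward scores for three candidate responses.
rewards = [0.1, 1.0, 0.3]
logits = [0.0, 0.0, 0.0]  # start with no preference between responses
for _ in range(200):
    logits = policy_gradient_step(logits, rewards, lr=1.0)

final_probs = softmax(logits)
print(final_probs)
```

After training, most of the probability mass sits on the second response, the one with the highest reward.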

5. Fine-Tuning

Fine-tuning trains the model on a smaller curated dataset to specialize it for a particular task such as:

Customer support

Legal document analysis

Code generation

Fine-tuning updates the model weights, so the change in behavior persists across every request rather than depending on what is in the prompt.
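
The core mechanic, continuing gradient descent from already-trained weights on a small task dataset, can be sketched with a one-parameter model. This is a deliberately tiny stand-in under stated assumptions: the model `y = weight * x`, the starting weight, and the dataset are all hypothetical.

```python
def fine_tune(weight, dataset, lr=0.05, epochs=100):
    """Continue gradient descent on y = weight * x from a given starting weight."""
    for _ in range(epochs):
        for x, y in dataset:
            error = weight * x - y
            # Gradient of the squared error (weight * x - y) ** 2 w.r.t. weight.
            weight -= lr * 2 * error * x
    return weight

pretrained_weight = 1.0  # stands in for weights learned during pre-training
task_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # small curated dataset
tuned_weight = fine_tune(pretrained_weight, task_data)
print(pretrained_weight, tuned_weight)
```

The tuned weight moves from the pre-trained value toward the value the task data implies, and it stays there for every later input.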

6. Retrieval-Augmented Generation (RAG)

RAG allows the model to retrieve information from external databases or knowledge bases before generating an answer.

Instead of storing all knowledge in the model weights, the system fetches relevant documents at query time and adds them to the prompt.

Advantages:

Knowledge can be updated without retraining

Works well with large knowledge bases

Can reduce hallucinations by grounding answers in retrieved text
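
The retrieve-then-generate flow can be sketched in a few lines. This example assumes a simple word-count similarity in place of a real embedding model and vector database, and the knowledge base, function names, and prompt layout are hypothetical. It stops at building the prompt, since the model call depends on the provider.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy 'embedding': lowercase word counts (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Place the retrieved text in front of the question."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"

# Hypothetical knowledge base; updating it requires no retraining.
knowledge_base = [
    "The refund window is 30 days from the delivery date.",
    "Support is available by email on weekdays.",
    "Shipping to Canada takes five business days.",
]
prompt = build_prompt("How long is the refund window?", knowledge_base)
print(prompt)
```

Editing an entry in `knowledge_base` changes what the model sees on the next request, which is the property that makes RAG suitable for frequently changing knowledge.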

7. In-Context Learning (ICL)

In-context learning means providing examples directly in the prompt. The model picks up the pattern from those examples at inference time, without any change to its weights, and generates a response accordingly.

For example, providing a few examples of question-answer pairs can guide the model to produce similar responses.

This method requires no training and takes effect as soon as the prompt changes.
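
A few-shot prompt of this kind can be assembled with plain string formatting. The sketch below assumes a simple `Q:`/`A:` layout and made-up example pairs; the helper name is hypothetical and the format is one of many that could work.

```python
def build_few_shot_prompt(examples, question):
    """Format example question-answer pairs, then the new question."""
    lines = []
    for q, a in examples:
        lines.append(f"Q: {q}")
        lines.append(f"A: {a}")
    lines.append(f"Q: {question}")
    lines.append("A:")  # left open for the model to complete
    return "\n".join(lines)

# Hypothetical examples that demonstrate the desired answer style.
examples = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Japan?", "Tokyo"),
]
few_shot_prompt = build_few_shot_prompt(examples, "What is the capital of Italy?")
print(few_shot_prompt)
```

The resulting string is sent to the model as-is; changing the examples changes the behavior on the next request with no training step.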

Choosing the Right Method

Each method has different trade-offs:

Pre-training: Most powerful but extremely expensive

Continued pre-training: Useful for domain adaptation

Fine-tuning: Good for task specialization

RAG: Best for dynamic knowledge

ICL: Fast and simple

In modern AI systems, these techniques are often combined. For example, a system may use a fine-tuned model together with RAG and prompt engineering to deliver accurate and up-to-date results.

Understanding when to use each approach is critical for building scalable AI applications.
