Adding Knowledge to LLMs: Methods for Adapting Large Language Models

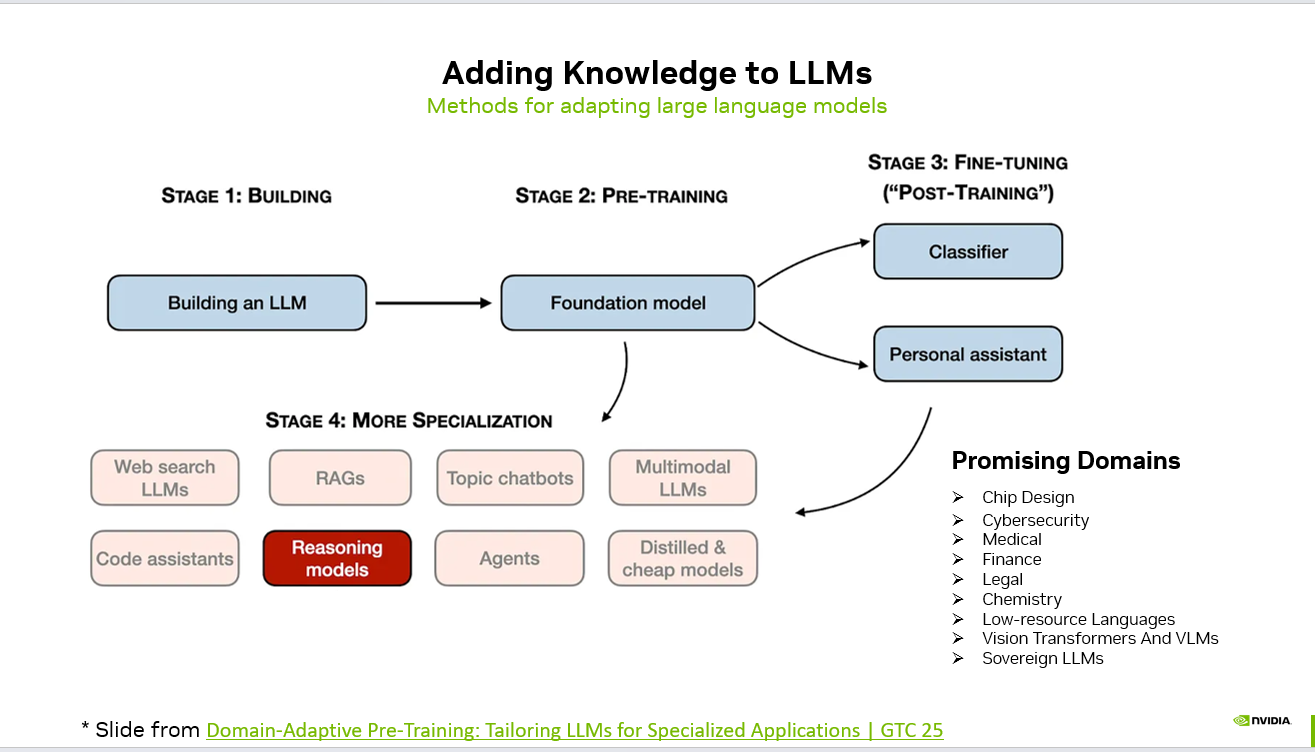

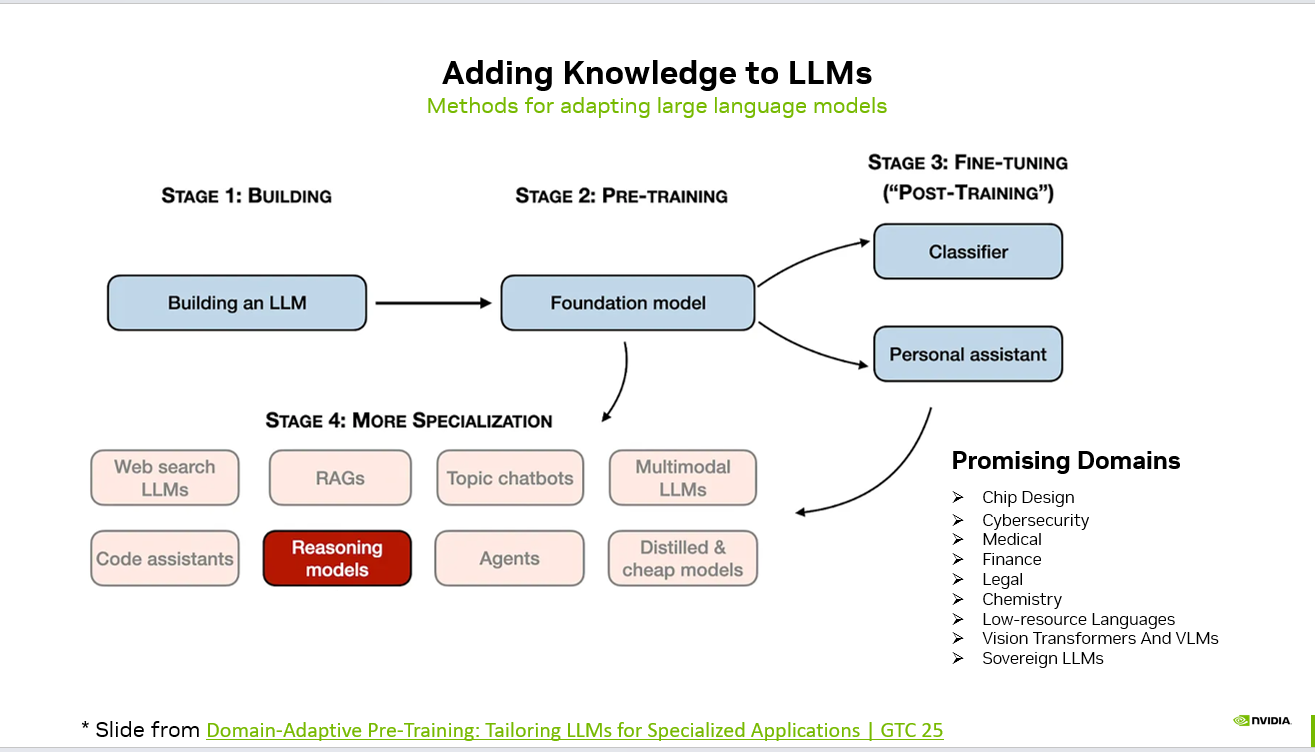

Large Language Models do not become powerful by accident. Their capabilities are the result of structured stages of development — from foundational training to domain specialization.

Understanding how knowledge is added to LLMs helps teams choose the right strategy for building production-ready AI systems.

Stage 1: Building the Model

The journey begins with constructing the base architecture — defining parameters, training infrastructure, and scaling strategy.

This stage focuses on:

Model architecture design

Tokenization strategy

Training data pipelines

Distributed training systems

The output of this stage is the technical foundation required for large-scale learning.
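As a rough illustration of how architecture design choices translate into model size, the sketch below estimates the parameter count of a decoder-only transformer. The config fields and the formula are a common back-of-the-envelope approximation, not something the source specifies: biases and layer norms are ignored, and the output head is assumed to share weights with the token embedding table.

```python
from dataclasses import dataclass


@dataclass
class ModelConfig:
    # Hypothetical config fields, chosen for illustration only.
    vocab_size: int
    d_model: int
    n_layers: int
    ffn_multiplier: int = 4  # feed-forward hidden size = ffn_multiplier * d_model


def estimate_params(cfg: ModelConfig) -> int:
    """Approximate parameter count, ignoring biases and layer norms."""
    # Query, key, value, and output projections: four d_model x d_model matrices.
    attention = 4 * cfg.d_model * cfg.d_model
    # Up and down projections of the feed-forward block.
    feed_forward = 2 * cfg.d_model * cfg.ffn_multiplier * cfg.d_model
    # Token embedding table (assumed tied with the output head).
    embeddings = cfg.vocab_size * cfg.d_model
    return cfg.n_layers * (attention + feed_forward) + embeddings


small = ModelConfig(vocab_size=32_000, d_model=768, n_layers=12)
print(f"{estimate_params(small):,} parameters (approximate)")
```

Doubling `d_model` roughly quadruples the per-layer cost, which is why width, depth, and vocabulary size are usually decided together with the training budget.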

Stage 2: Pre-Training (Foundation Model)

Pre-training turns the architecture into a foundation model by training it on massive, diverse datasets, typically with a self-supervised objective such as next-token prediction.

The flowchart below (Mermaid source) outlines the post-training pipeline that follows this stage: a curated dataset is cleaned, tokenized, trained with one of three methods, evaluated, and deployed.

    flowchart LR
        DATA[("Curated dataset<br/>instruction or chat")]
        CLEAN["Clean and dedupe<br/>PII filter"]
        TOK["Tokenize and pack"]
        METHOD{"Method"}
        LORA["LoRA or QLoRA<br/>adapters only"]
        SFT["Full SFT<br/>all params"]
        DPO["DPO or RLHF<br/>preference learning"]
        EVAL["Held out eval<br/>plus regression suite"]
        DEPLOY[("Adapter or<br/>merged model")]
        DATA --> CLEAN --> TOK --> METHOD
        METHOD --> LORA --> EVAL
        METHOD --> SFT --> EVAL
        METHOD --> DPO --> EVAL
        EVAL --> DEPLOY
        style METHOD fill:#4f46e5,stroke:#4338ca,color:#fff
        style EVAL fill:#f59e0b,stroke:#d97706,color:#1f2937
        style DEPLOY fill:#059669,stroke:#047857,color:#fff

This phase enables the model to:

Learn language patterns

Acquire general world knowledge

Develop reasoning abilities

Understand syntax and semantics

The result is a general-purpose model capable of handling a wide variety of tasks.
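To make "learning language patterns" concrete, here is a toy sketch of the next-token prediction objective commonly used in pre-training. It is an assumption-laden stand-in: a bigram count table replaces the neural network, counting replaces gradient descent, and the one-sentence corpus is invented for illustration. What it does show faithfully is the loss being measured: the average cross-entropy of predicting each token from its context.

```python
import math
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count next-token frequencies: a toy stand-in for gradient-based pre-training."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_loss(counts, tokens, vocab_size, smoothing=1.0):
    """Average cross-entropy (in nats) of predicting each token from the one before it."""
    total = 0.0
    for prev, nxt in zip(tokens, tokens[1:]):
        seen = counts[prev]
        # Add-one smoothing keeps unseen continuations from having zero probability.
        prob = (seen[nxt] + smoothing) / (sum(seen.values()) + smoothing * vocab_size)
        total -= math.log(prob)
    return total / (len(tokens) - 1)


# Invented corpus, for illustration only.
corpus = "the model learns the next token and the model improves".split()
vocab = set(corpus)

model = train_bigram(corpus)
trained = next_token_loss(model, corpus, len(vocab))
untrained = next_token_loss(defaultdict(Counter), corpus, len(vocab))
print(f"untrained: {untrained:.3f} nats, trained: {trained:.3f} nats")
```

The untrained table predicts every token with equal probability, so its loss equals the log of the vocabulary size; the trained table scores lower because it has absorbed which words tend to follow which.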

Stage 3: Fine-Tuning (Post-Training)

Fine-tuning adapts the foundation model to specific applications.

Common outcomes include:

Classifiers for structured prediction tasks

Personal assistants optimized for dialogue

Instruction-following models

This stage often involves supervised fine-tuning, reinforcement learning from human feedback (RLHF), or alignment-focused optimization.
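One widely used parameter-efficient variant of supervised fine-tuning is LoRA, which freezes the pretrained weights and trains a small low-rank update alongside them. The sketch below is a minimal pure-Python illustration under stated assumptions: the matrix sizes, rank, and scaling factor are arbitrary, and the helper names are hypothetical. A real project would use a tensor library and an adapter framework instead.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_forward(x, W, A, B, alpha, r):
    """Compute x @ W + (alpha / r) * x @ A @ B, with W frozen and only A, B trainable."""
    base = matmul(x, W)
    update = matmul(matmul(x, A), B)
    scale = alpha / r
    return [[b + scale * u for b, u in zip(brow, urow)] for brow, urow in zip(base, update)]


# Illustrative sizes only.
d_in, d_out, r, alpha = 64, 64, 4, 8
rng = random.Random(0)

W = [[rng.gauss(0, 1) for _ in range(d_out)] for _ in range(d_in)]  # frozen pretrained weight
A = [[rng.gauss(0, 0.01) for _ in range(r)] for _ in range(d_in)]   # trainable down-projection
B = [[0.0] * d_out for _ in range(r)]                               # trainable up-projection, zero-initialized

x = [[1.0] * d_in]
full_params = d_in * d_out
lora_params = d_in * r + r * d_out

# Because B starts at zero, the adapter is a no-op before any training step.
print(lora_forward(x, W, A, B, alpha, r) == matmul(x, W))
print(f"adapter trains {lora_params} parameters next to {full_params} frozen ones")
```

Zero-initializing one of the two low-rank matrices means fine-tuning starts from exactly the foundation model's behavior, and the adapter can later be shipped separately or merged into `W`.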

Stage 4: Advanced Specialization

Beyond fine-tuning, models can be specialized further through additional techniques and product patterns:

Retrieval-Augmented Generation (RAG)

Web-search integrated LLMs

Topic-specific chatbots

Code assistants

Reasoning-optimized models

AI agents capable of multi-step workflows

Distilled and cost-efficient models

Multimodal LLMs (text + vision)

This is where models evolve from general intelligence to domain expertise.
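Of the techniques listed above, Retrieval-Augmented Generation is the easiest to sketch: knowledge is added at query time by retrieving relevant text and placing it in the prompt, with no change to model weights. The example below is a minimal sketch under clear assumptions: a bag-of-words vector stands in for a learned embedding model, the three documents are an invented knowledge base, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words 'embedding'; a real system would use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query, documents, k=1):
    """Assemble the augmented prompt that would be sent to the model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base, for illustration only.
docs = [
    "The clinic is open Monday to Friday from 9am to 5pm.",
    "Refunds are processed within five business days.",
    "The API rate limit is 100 requests per minute.",
]
print(build_prompt("When is the clinic open?", docs))
```

The design trade-off against fine-tuning is visible here: updating the knowledge base means editing `docs`, not retraining anything.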

Promising Application Domains

As specialization improves, LLMs are increasingly applied in high-impact domains:

Chip design

Cybersecurity

Medical and healthcare

Finance

Legal systems

Chemistry and scientific research

Low-resource language support

Vision-language systems (VLMs)

Sovereign AI initiatives

Why This Matters

Adding knowledge to LLMs is not a single step — it is a layered process combining architecture, data, alignment, and specialization.

For AI builders, the key questions are:

Do you need broader intelligence or deeper domain expertise?

Should you fine-tune, use RAG, or build agents?

Is cost-efficiency more important than scale?

Understanding these stages allows teams to design AI systems that are not only powerful — but purpose-built.

Source: NVIDIA


## Adding Knowledge to LLMs: Methods for Adapting Large Language Models (operator perspective)

Behind "Adding Knowledge to LLMs: Methods for Adapting Large Language Models" sits a smaller, more useful question: which production constraint just got cheaper to solve (first-token latency, language coverage, structured outputs, or tool-call reliability)? On the CallSphere side, the practical filter is simple: would this make a 90-second appointment-booking call faster, cheaper, or more reliable? If the answer is "maybe in a benchmark," it doesn't ship to production.

## Base model vs. production LLM stack: the gap that costs you uptime

A base model is a checkpoint. A production LLM stack is a whole different artifact: eval gates that fail the build on regression, prompt caching that cuts repeated-system-prompt cost by 40-70%, structured outputs that prevent JSON drift on tool calls, fallback chains that route to a smaller-model retry when the primary times out, and request-side guardrails that cap tool calls per session before the loop spirals.

CallSphere runs LLMs in tandem on purpose: `gpt-4o-realtime` for the live call (streaming audio in and out, tool calls inline) and `gpt-4o-mini` for post-call analytics (sentiment scoring, lead qualification, summary generation, and the lower-stakes async work that doesn't need realtime). That split is not a cost optimization; it's a reliability decision. Realtime is optimized for low-latency turn-taking; mini is optimized for cheap, deterministic batch scoring. Mixing them lets each do what it's good at without one regressing the other.

The teams that struggle with LLMs in production almost always made the same mistake: they treated "the model" as a single dependency, instead of as a small portfolio of models, each pinned to a job, each behind its own eval suite, each with a documented fallback.
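Two of the stack components named above, fallback chains and per-session tool-call caps, can be sketched in a few lines. This is an illustration under assumptions, not CallSphere's implementation: the class and function names are hypothetical, the cap of five calls is arbitrary, and the two "models" are stand-in functions where real code would call a provider API.

```python
class ToolCallLimitExceeded(Exception):
    """Raised when a session tries to exceed its tool-call budget."""


class Session:
    def __init__(self, max_tool_calls=5):  # the cap value is an assumption
        self.max_tool_calls = max_tool_calls
        self.tool_calls = 0

    def record_tool_call(self):
        """Count one tool call, refusing once the budget is spent."""
        if self.tool_calls >= self.max_tool_calls:
            raise ToolCallLimitExceeded(f"limit of {self.max_tool_calls} tool calls reached")
        self.tool_calls += 1


def call_with_fallback(prompt, models):
    """Try each (name, callable) in order; fall through to the next on timeout."""
    errors = []
    for name, call in models:
        try:
            return name, call(prompt)
        except TimeoutError as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


# Stand-in model clients; real ones would call a provider API.
def primary(prompt):
    raise TimeoutError("primary timed out")


def smaller(prompt):
    return f"[fallback answer to: {prompt}]"


used, reply = call_with_fallback("Book a 3pm slot", [("primary", primary), ("smaller", smaller)])
print(used, reply)
```

Only timeouts trigger the fallback here; other exceptions propagate, on the assumption that a malformed request would fail the same way on the smaller model.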
## FAQs

**Q: Why isn't adding knowledge to LLMs an automatic upgrade for a live call agent?**

A: Most of the time it isn't an upgrade at all, and that's the right starting assumption. The relevant test is whether it improves at least one of: p95 first-token latency, tool-call argument accuracy on noisy inputs, multi-turn handoff stability, or per-session cost. CallSphere ships in 57+ languages, is HIPAA and SOC 2 aligned, and runs voice, chat, SMS, and WhatsApp from the same agent stack.

**Q: How do you sanity-check adding knowledge to LLMs before pinning the model version?**

A: The eval gate is unsentimental: a regression suite that simulates real call traffic (noisy ASR, partial inputs, tool-call timeouts) measures four numbers, and a candidate has to win on three of four without losing badly on the fourth. Anything else is treated as a blog post, not a stack change.

**Q: Where does adding knowledge to LLMs fit in CallSphere's 37-agent setup?**

A: In a CallSphere deployment, new model and API capabilities land first in the post-call analytics pipeline (lower stakes, async, easy to roll back) and only later in the live realtime path. Today the verticals most likely to absorb new capability first are Salon and Real Estate, which already run the largest share of production traffic.

## See it live

Want to see after-hours escalation agents handle real traffic? Walk through https://escalation.callsphere.tech or grab 20 minutes with the founder: https://calendly.com/sagar-callsphere/new-meeting.
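The eval gate described in the FAQs ("win on three of four without losing badly on the fourth") can be expressed as a small check. The sketch below makes several assumptions that the text does not confirm: that the four numbers are the four metrics named in the first answer, that "losing badly" means more than a 10% relative regression, and that the baseline and candidate values shown are invented for illustration.

```python
# Metric names and the 10% "losing badly" threshold are assumptions for illustration.
HIGHER_IS_BETTER = {
    "p95_first_token_latency_ms": False,
    "tool_call_arg_accuracy": True,
    "handoff_stability": True,
    "cost_per_session_usd": False,
}


def relative_gain(name, baseline, candidate):
    """Positive when the candidate is better, as a fraction of the baseline value."""
    delta = (candidate - baseline) / baseline
    return delta if HIGHER_IS_BETTER[name] else -delta


def passes_gate(baseline, candidate, min_wins=3, max_loss=0.10):
    """Require at least min_wins improvements and no regression worse than max_loss."""
    gains = {m: relative_gain(m, baseline[m], candidate[m]) for m in HIGHER_IS_BETTER}
    wins = sum(g > 0 for g in gains.values())
    worst = min(gains.values())
    return wins >= min_wins and worst >= -max_loss


# Invented numbers, for illustration only.
baseline = {"p95_first_token_latency_ms": 800, "tool_call_arg_accuracy": 0.90,
            "handoff_stability": 0.95, "cost_per_session_usd": 0.40}
candidate = {"p95_first_token_latency_ms": 700, "tool_call_arg_accuracy": 0.93,
             "handoff_stability": 0.96, "cost_per_session_usd": 0.42}
print(passes_gate(baseline, candidate))
```

In this invented case the candidate improves three metrics and costs 5% more per session, so it clears the gate; raising the cost regression past the threshold would fail it regardless of the other wins.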
